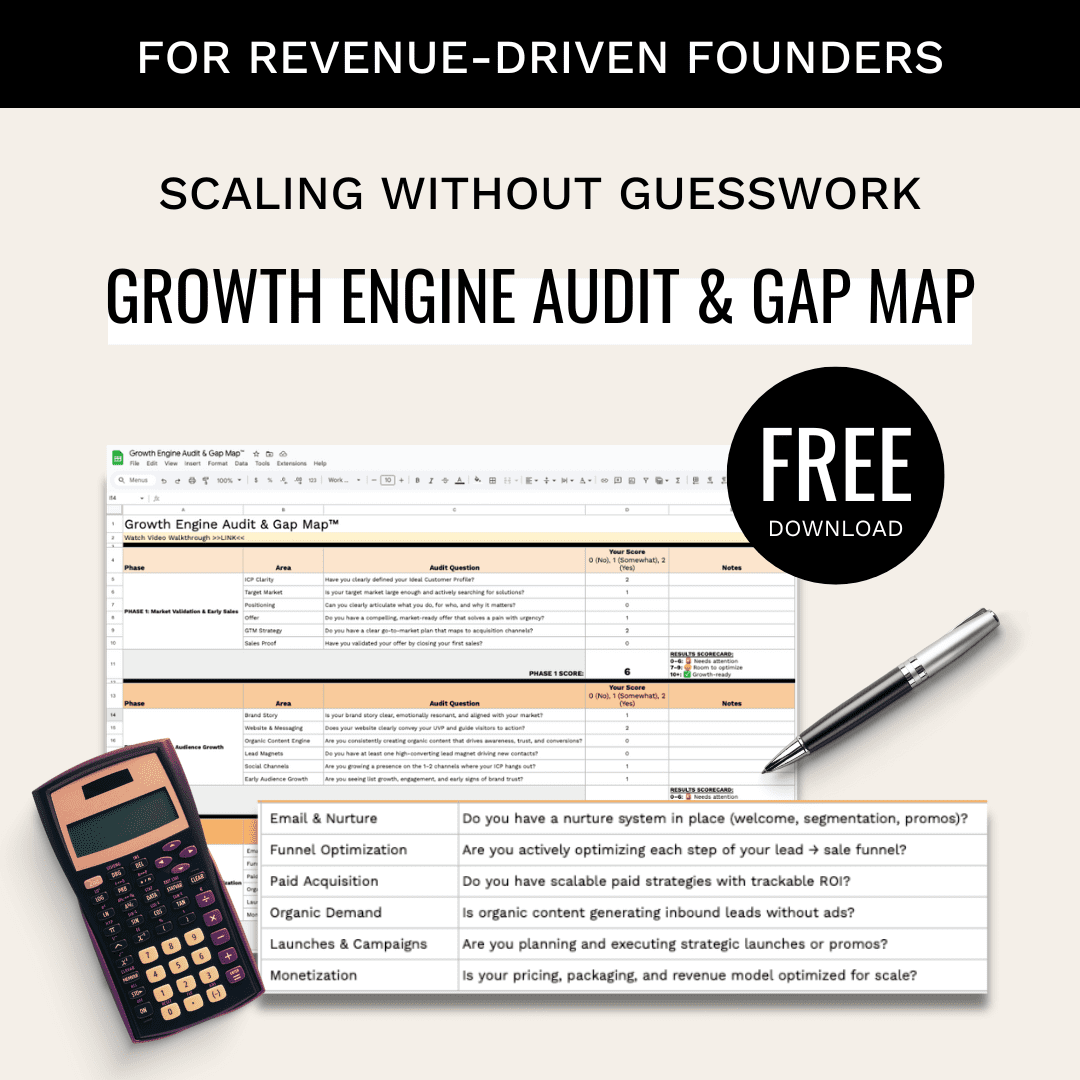

Pricing transparency, or the lack of it, can make or break a deal with customers. But when an AI product lacks pricing transparency AND almost gets your 380k-follower LinkedIn account nuked? That’s an absurdly bad customer experience that all AI startups can and should learn from…

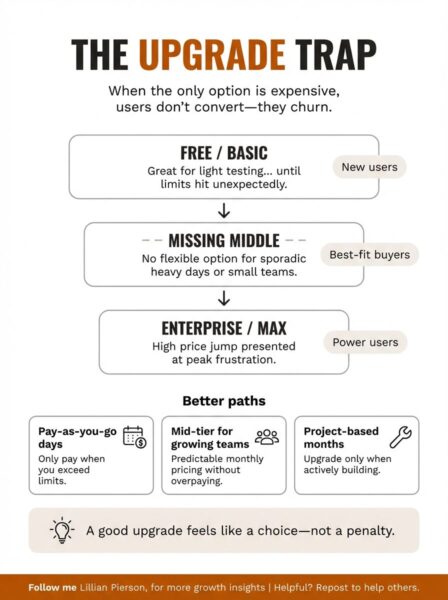

The notification appeared while I was mid-research session.

“Important notice from LinkedIn.”

My stomach dropped. With 380,000 followers and 92,000 newsletter subscribers, a LinkedIn account restriction was potentially catastrophic.

The message was direct: “We noticed activity from your account that indicates you might be using an automation tool. This can sometimes be caused by browser extensions or third-party applications that run in the background.”

The LinkedIn warning that nearly destroyed my account

Here’s what terrified me: I had no idea I’d done anything wrong.

I was using Perplexity’s Comet browser.

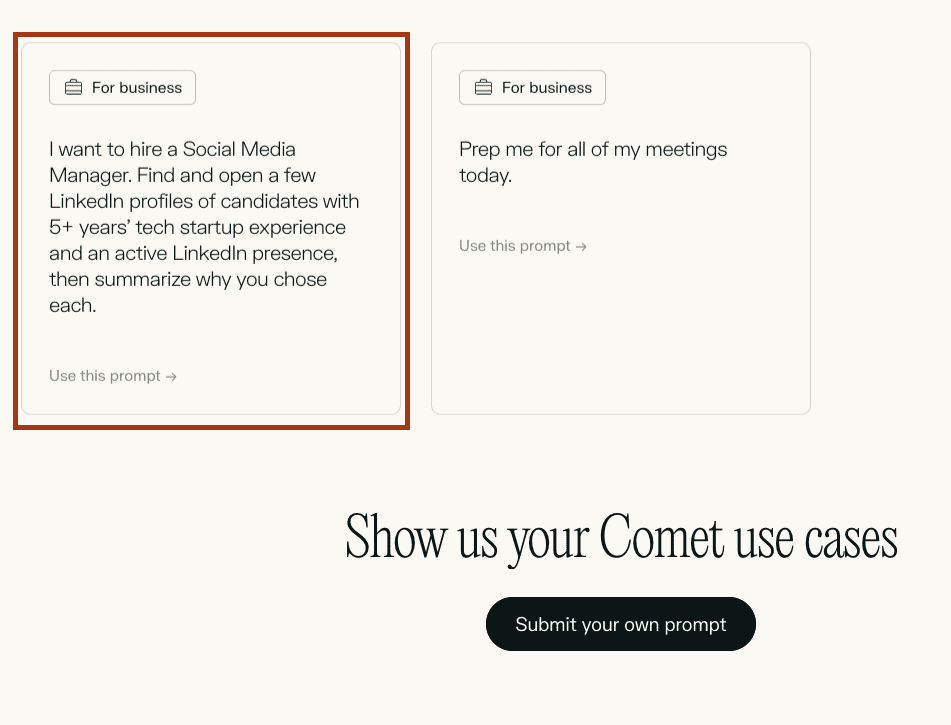

I was using it for legitimate work. Their marketing literally told me to use it inside LinkedIn, as you can see below 👇

Comet’s own marketing encouraged using the product to take actions within LinkedIn!

LinkedIn’s warning was clear: “To protect our members’ privacy and help foster authentic interactions on LinkedIn, our User Agreement doesn’t allow the use of automated software. Use of these tools may lead to your account being restricted.“


I stopped using Comet that day. Not because the product wasn’t powerful, but because a single trust violation destroyed any confidence I had in the tool.

Oddly enough, I only started using Comet in the first place because Perplexity had reached out about wanting to do a paid partnership – I guess this was not the user feedback they were looking for, and this post definitely isn’t paid.


And here’s the thing that keeps me up at night… This wasn’t an isolated incident. It’s part of a broader pattern I’m seeing across AI pricing and product design that’s quietly destroying user adoption.

Let me show you what I mean.

Over the past 6 months, I’ve tested dozens of AI tools – the ones I’m covering here include Perplexity Comet, Claude, OpenArt, Gamma, ChatGPT, Bolt, and LinkedIn integrations – to understand what pricing transparency actually looks like in practice. I’ve identified 3 frameworks that separate trust-building products from churn-generating ones.

Here’s what I learned from real product teardowns.

The Invisible Limits Problem: Pricing Transparency & Why Your Users Can’t Trust You

Think AI credit systems are just a pricing decision?

They’re actually a trust signal. And most AI tools are sending the wrong message.

LinkedIn sent the warning a few days after I ran out of Comet credits (not while I was actually using Comet 🤷‍♀️). Thirty-six hours before I received the warning from LinkedIn, I was researching summer home locations using Comet.

The tool was performing beautifully: surfacing insights, comparing markets, pulling together data I’d have spent hours finding manually.

Then suddenly: “You’ve hit your daily limit.”

A moment later, the message changed: “You’ve used all your credits for the month.”

I’d burned through my entire monthly allocation in a single day. One research session. And I had zero warning it was coming.

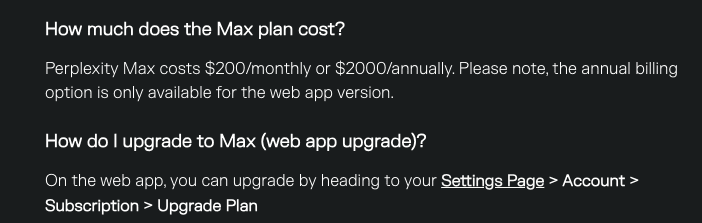

No meter showing credits depleting. No alert at 75% usage. No way to pace my work or know I was approaching a limit. The only option presented: upgrade to Perplexity Max for $200/month (billed annually).

The only option after burning through credits in one day: $200/month

Here’s what most AI founders miss: This wasn’t a conversion opportunity. It was the moment I decided to stop using the product entirely.

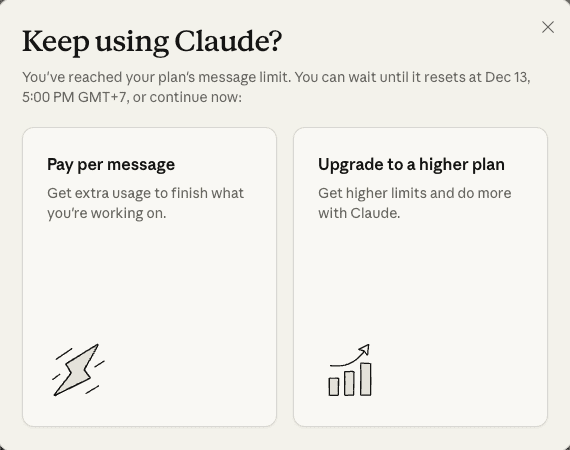

The same pattern played out with Claude. I’ve maxed out my Claude Pro credits 7 times since September. Every single time, I had no visibility into how close I was to the limit. No way to manage my usage strategically. No ability to optimize my workflow around the restrictions.

When you work less than 30 hours per week with sporadic afternoon sessions like I do, you don’t need consistently high daily limits. But you do need to know where you stand.

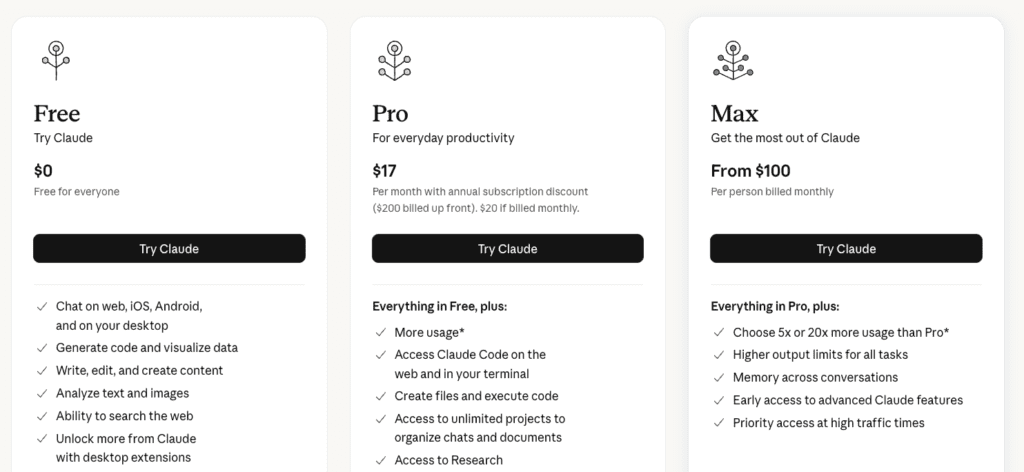

Claude recently introduced a pay-as-you-go option for daily overage, which is genuinely innovative.

Instead of forcing me into a $100/month Max plan (where I’d overpay by about $70/month for my actual usage), I can now pay only for the days I need extra capacity.

However, the core problem remains: I still can’t see my daily credit balance. I still hit limits without warning. And it still feels like the system is designed to create surprise friction at peak productivity moments rather than empower me to manage my usage.

Or put another way: it feels like they want you to run out so they can upsell you.

The Invisible Meter Problem Framework

When AI tools hide usage limits, they trigger a predictable user behavior sequence:

- Surprise friction at peak engagement → Users hit limits during their most productive or valuable work moments, creating negative associations with the product itself.

- Impossible usage optimization → Without visibility into remaining credits, users can’t pace work strategically or make informed decisions about when to use the tool versus when to wait.

- Trust erosion → Hidden limits feel manipulative rather than helpful, especially when paired with aggressive upsell prompts at the moment of friction.

- Product avoidance → Users begin rationing usage or avoiding the tool entirely to prevent surprise interruptions, reducing overall engagement and limiting their discovery of the product’s full value.

The psychological impact is profound. You’re not training users to upgrade. You’re training them to find alternatives.

The Trust Builders: How OpenArt and Gamma Get Visibility Right

Not every AI tool gets this wrong.

Every time I generate an image in OpenArt for my Convergence newsletter graphics, I check my credit balance first. I can see exactly what I have: 9,586 credits displayed in green in the top corner.

OpenArt shows my exact credit balance – no guessing, no surprises. This is what good pricing transparency looks like.

I know exactly what I’m spending: 60 credits for a high-quality nanobanana pro image.

And I make the conscious decision that it’s worth it. And honestly, it’s fun.

This feels empowering.

I’m choosing to spend credits on quality because I can see the trade-off clearly. I’m not worried about surprise limits. I’m not anxious about running out unexpectedly. The visibility creates confidence, and the confidence creates loyalty.

OpenArt costs $29/month for 12,000 credits. More than some alternatives. But the transparent credit system makes me feel in control rather than manipulated.
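To make that trade-off concrete, here’s a rough sketch of the arithmetic in Python. The plan price, credit allocation, balance, and per-image cost are the numbers from this article; the `preview_spend` function and `SpendPreview` type are names I made up for illustration, not anything OpenArt exposes.

```python
from dataclasses import dataclass


@dataclass
class SpendPreview:
    balance_before: int
    balance_after: int
    dollar_cost: float


def preview_spend(balance, action_credits, plan_price, plan_credits):
    """Return what an action will cost before the user commits to it."""
    if action_credits > balance:
        raise ValueError("not enough credits for this action")
    per_credit = plan_price / plan_credits  # dollars per credit
    return SpendPreview(
        balance_before=balance,
        balance_after=balance - action_credits,
        dollar_cost=round(action_credits * per_credit, 3),
    )


# Numbers from this article: $29/month for 12,000 credits, 60 credits per image.
preview = preview_spend(balance=9586, action_credits=60,
                        plan_price=29.0, plan_credits=12000)
print(preview)
```

With these inputs, one image takes the balance from 9,586 to 9,526 and works out to roughly 14.5 cents. Showing both numbers before the click is the whole point: the user decides, the product doesn’t decide for them.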

Gamma does something similar with their 10,000 monthly credits. When they transitioned from unlimited to a credit system, I wasn’t thrilled. But at least they show you your remaining balance as you use the tool.

Gamma’s credit visibility – another instance of spectacular pricing transparency. I always know where I stand.

Transparency alone doesn’t solve everything though.

I’ve noticed I experiment less with Gamma now. The credit anxiety crept in. Each iteration carries a cost I can see depleting in real-time. Instead of testing 5 layout variations to find the perfect format, I’ll do 2 or 3 and call it good enough.

The psychological shift from “unlimited exploration” to “managed resource” changed how I engage with the product. Less innovation discovery, more conservative usage.

The hard part is that credit systems, even transparent ones, create resource scarcity that makes users more strategic but less experimental. If your product’s differentiation depends on users pushing boundaries and discovering novel applications, visible credit depletion might protect revenue while sacrificing advocacy.

But I’d still choose OpenArt’s transparent system over Perplexity’s invisible limits any day. At least with visibility, I’m making conscious trade-offs instead of getting surprised by restrictions I didn’t know existed.

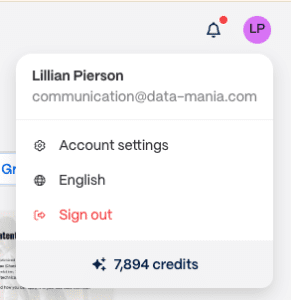

The All-or-Nothing Upgrade Trap (And How Claude Escaped It)

Here’s what might surprise you: The problem with most AI pricing isn’t the price itself. It’s the mismatch between pricing tiers and actual usage patterns. Better pricing transparency allows users to more easily identify that gap (which may be why many products actively avoid pricing transparency).

Let’s look deeper at this…

Thirty-six hours before I got the warning from LinkedIn, I hit Perplexity Comet’s monthly limit after just a single day of summer home research. The only option was $200/month. No middle tier. No flexible option. No way to pay for just the research sessions I actually needed.

For someone with sporadic usage (a few intensive days per month, not consistent daily needs), this felt absurd. The gap between “free tier that runs out in one day” and “$200/month enterprise pricing” left me in product limbo.

Compare that to Claude’s new pay-as-you-go option. Instead of forcing me into a $100/month Max plan for capacity I’d rarely use, I can pay only for the specific days I need extra credits. This matches my actual work pattern: heavy usage a few days per week, minimal usage the rest of the time.

Or look at Bolt’s approach: free tier with optional $25-50/month upgrades only for months when I’m actively building projects. Project-based pricing that matches usage patterns. Clear warnings before hitting limits. Predictable, transparent, and actually useful.

Even ChatGPT’s model works, though for different reasons. At $20/month, I never run out of credits. The limits are generous enough that I don’t need visibility because I never hit friction. Abundance eliminates the need for meter-watching.

The Usage Pattern Mismatch Framework

AI founders building pricing systems need to ask:

- Do your pricing tiers match how users actually work? Sporadic heavy users need different options than consistent moderate users. One-size pricing forces overpayment or creates surprise limits.

- Are you creating mid-tier gaps? The jump from free/basic to enterprise often leaves your best potential customers (growing startups, freelancers, small teams) with no good option.

- Does your upgrade path feel like a choice or a trap? Presenting only one expensive upgrade option at the moment of peak frustration doesn’t optimize conversion. It optimizes churn.

- Can users see what they’re paying for? If you’re charging based on usage, show the usage. If you’re setting limits, show the limits. Hidden metrics create hidden friction.

Think about your last SaaS pricing decision. Did you upgrade because the product delighted you, or because you felt trapped by artificial limits? That feeling matters.
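One way to pressure-test the first two questions is to price out a few real usage patterns against each tier. The sketch below does that in Python. Every plan name, fee, and overage rate in it is a hypothetical placeholder I picked for illustration, not any vendor’s actual pricing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_fee: float                # flat fee charged every month
    included_heavy_days: int          # heavy-usage days the flat fee covers
    overage_per_day: Optional[float]  # price per extra heavy day; None = hard stop


def monthly_cost(plan, heavy_days):
    """Cost of a month with `heavy_days` heavy days, or None if the plan can't cover it."""
    extra = max(0, heavy_days - plan.included_heavy_days)
    if extra == 0:
        return plan.monthly_fee
    if plan.overage_per_day is None:
        return None  # the user hits a wall with no way to pay for more
    return plan.monthly_fee + extra * plan.overage_per_day


def cheapest(plans, heavy_days):
    """Return (cost, plan name) for the cheapest plan that covers the month."""
    viable = [(monthly_cost(p, heavy_days), p.name) for p in plans]
    viable = [(cost, name) for cost, name in viable if cost is not None]
    return min(viable) if viable else None


# Hypothetical tiers, for illustration only.
PLANS = [
    Plan("basic", 20.0, 2, None),
    Plan("basic + pay-as-you-go", 20.0, 2, 5.0),
    Plan("max", 100.0, 31, None),
]

for days in (2, 8, 20):
    print(days, cheapest(PLANS, days))
```

Under these made-up numbers, a sporadic user with 8 heavy days is best served by the pay-as-you-go option, while the flat top tier only wins once heavy usage becomes the norm. If no plan comes out as a sensible answer for a usage pattern you actually see in your data, that’s your mid-tier gap.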

The Hidden Liability Gap: When Your Product Breaks Other Platforms’ Rules

Let’s go back to that LinkedIn warning.

This wasn’t just a UX problem. This was a legal and professional risk transfer that I had no idea I was accepting.

Comet’s marketing showed LinkedIn recruiting use cases. Their safety documentation warned about “suspicious content” and encouraged users to “carefully review Comet actions.” But nowhere did they mention that using their browser on LinkedIn could trigger automation flags.

Comet’s safety messaging… notice what’s missing: any warning about LinkedIn automation detection

Nowhere did they warn that my 380,000-follower account could be restricted.

When AI tools market use cases that violate third-party platforms’ Terms of Service without explicit warnings, they’re transferring massive risk to users. And most users, like me, have no idea until they get the scary warning message.

This destroys trust immediately and permanently. I won’t return to Comet even after account restrictions are lifted. The near-miss was enough.

The Third-Party Risk Transfer Framework

If you’re building AI tools that interact with other platforms, here’s your checklist:

- Audit every third-party integration for ToS conflicts. Don’t just assume your use cases are safe. Actually read the platform agreements. LinkedIn explicitly prohibits automation. Discord has specific bot policies. Twitter/X has API restrictions. Know the rules.

- Explicitly warn users about risks before they take action. Not buried in documentation. Not implied in safety tips. Direct, clear warnings: “Using this feature on LinkedIn may trigger automation detection and result in account restrictions.”

- Never market use cases you haven’t verified are safe. If your product page shows LinkedIn recruiting prompts but LinkedIn prohibits automation, you’re setting users up for account risk. That’s not just bad UX. That’s negligent.

- Consider building platform-specific guardrails. If certain platforms have strict automation policies, disable those integrations or require explicit user acknowledgment of risk before proceeding.
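Here’s a minimal sketch of what that last guardrail could look like, in Python. It assumes a hand-maintained policy table keyed by domain; the table, the function names (`risk_warning`, `may_automate`), and the acknowledgment flag are all hypothetical. A real table would come from actually reading each platform’s current terms.

```python
from urllib.parse import urlparse

# Hypothetical policy table, for illustration only.
RESTRICTED_HOSTS = {
    "linkedin.com": (
        "Using this feature on LinkedIn may trigger automation detection "
        "and result in account restrictions."
    ),
}


def risk_warning(url):
    """Return the warning for a restricted platform, or None if the site is not listed."""
    host = urlparse(url).hostname or ""
    for domain, warning in RESTRICTED_HOSTS.items():
        # Match the domain itself and its subdomains, but not lookalikes.
        if host == domain or host.endswith("." + domain):
            return warning
    return None


def may_automate(url, user_acknowledged_risk):
    """Allow an automated action only if the site is unlisted or the user accepted the warning."""
    return risk_warning(url) is None or user_acknowledged_risk


print(may_automate("https://www.linkedin.com/feed/", user_acknowledged_risk=False))
print(risk_warning("https://www.linkedin.com/feed/"))
```

The design choice that matters is the default: on a listed platform the action is blocked until the user has seen the warning and said yes. The risk stays with the person who chose to accept it.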

Here’s what most founders miss: When users face account restrictions because of your product, they don’t just stop using your tool. They actively warn others. They write angry reviews. They become anti-advocates.

The reputational damage far exceeds any revenue you’d have gained from that user.

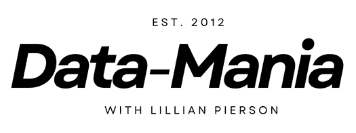

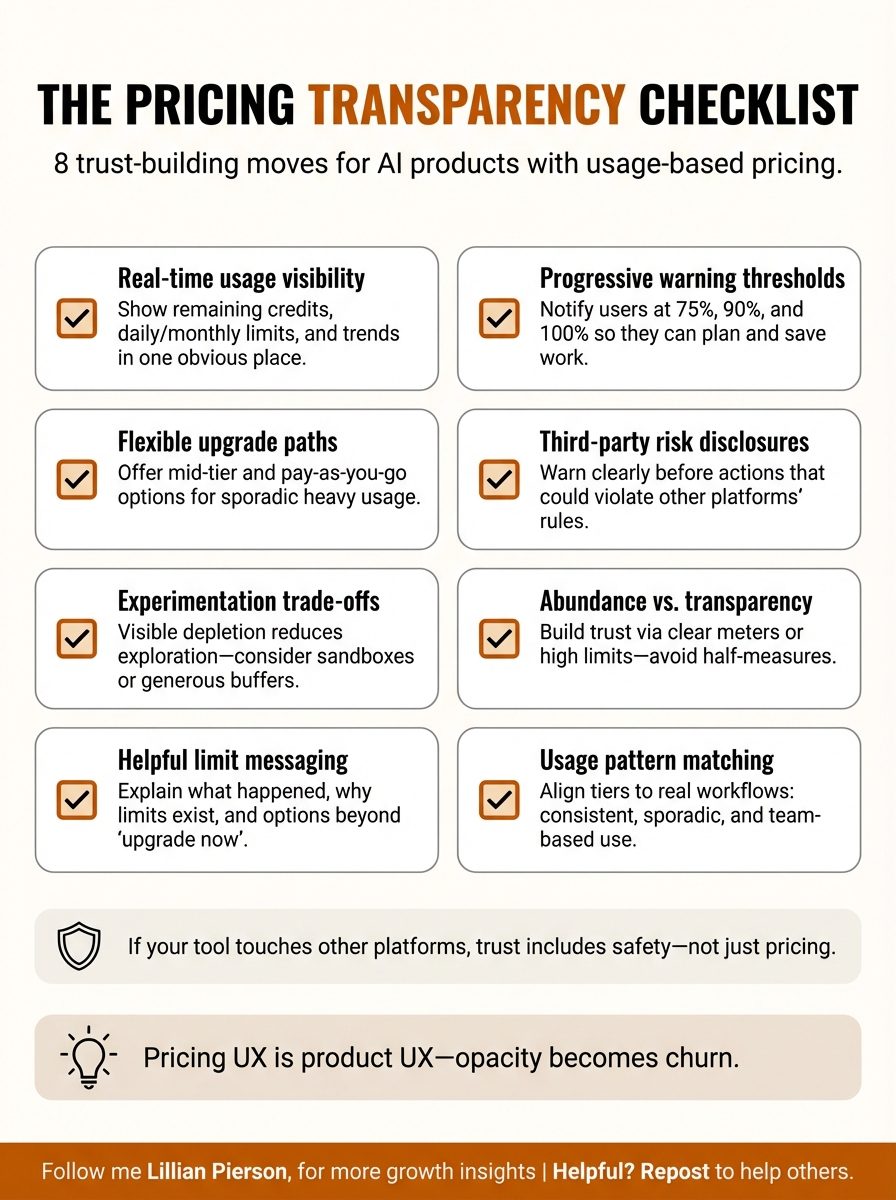

Steal This: The AI Pricing Transparency Checklist

Want to build an AI product with usage-based pricing transparency? Copy this checklist:

- Real-time usage visibility. Display current credit balance, remaining daily/monthly limits, and usage trends in a persistent, easy-to-find location. Users should never have to wonder where they stand.

- Progressive warning thresholds. Alert users at 75%, 90%, and 100% of their limits. Give them time to save work, wrap up tasks, or upgrade before hitting hard stops. Warnings prevent surprise friction.

- Flexible upgrade paths. Offer multiple tier options beyond “free” and “enterprise.” Pay-as-you-go for sporadic users. Mid-tier monthly for growing teams. Let users choose the model that matches their actual usage pattern.

- Third-party integration risk disclosures. If your product interacts with other platforms (social media, communication tools, productivity apps), explicitly warn about potential ToS violations before users take risky actions.

- Experimentation vs. conservation trade-offs. Recognize that visible credit systems reduce experimentation. If innovation discovery is core to your value prop, consider generous limits or sandbox environments where users can explore without credit anxiety.

- Abundance vs. transparency strategies. You can build trust through complete transparency (OpenArt’s visible meters) or through generous limits that eliminate the need for tracking (ChatGPT’s effective abundance). Pick one and commit. Half-measures create the worst of both worlds.

- Warning communication that builds trust. When users approach limits, don’t just show “upgrade now” CTAs. Explain what’s happening, why limits exist, and what their options are. Treat it as helpful information, not sales pressure.

- Usage pattern matching. Study how your actual users work. Do they have consistent daily usage or sporadic intensive sessions? Build pricing tiers that match reality, not your ideal customer profile.

Each item on this list addresses a specific trust break I’ve experienced across my AI tool stack. The founders who get this right aren’t just building better pricing; they’re building competitive moats.
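The first two items on the checklist, real-time visibility and progressive warnings, are not hard to build. Here’s a minimal Python sketch, assuming a simple in-memory counter. The `UsageMeter` class and its method names are hypothetical; the 75%, 90%, and 100% thresholds are the ones from the checklist.

```python
class UsageMeter:
    """Tracks credit usage and reports each warning threshold exactly once."""

    def __init__(self, limit, thresholds=(0.75, 0.90, 1.00)):
        if limit <= 0:
            raise ValueError("limit must be positive")
        self.limit = limit
        self.used = 0
        self._pending = sorted(thresholds)  # thresholds not yet announced

    @property
    def remaining(self):
        """Credits left, the number users should always be able to see."""
        return max(0, self.limit - self.used)

    def record(self, credits):
        """Add usage and return the thresholds newly crossed by this call."""
        self.used += credits
        fraction = self.used / self.limit
        crossed = [t for t in self._pending if fraction >= t]
        self._pending = [t for t in self._pending if fraction < t]
        return crossed


meter = UsageMeter(limit=1000)
for spend in (700, 100, 150, 50):
    for threshold in meter.record(spend):
        print(f"Heads up: you've used {threshold:.0%} of your credits "
              f"({meter.remaining} remaining).")
```

Each threshold fires once, so the user gets a heads-up at 75% and 90% instead of a wall at 100%. What the message says when it fires (an explanation and options, not just an upgrade button) is the other half of the job.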

The Real Cost of Pricing Opacity

AI tools are operating in a narrow loyalty window right now.

Most founders and operators are testing 25+ AI tools simultaneously. We’re in evaluation mode, comparing capabilities, trying out workflows, deciding what stays in our stack and what gets churned.

One surprise friction event is enough to trigger permanent churn.

I stopped using Perplexity Comet after the LinkedIn warning and the 1-day credit burnout. Not because the product lacked features. The pricing UX and risk transfer destroyed my trust.

I reduced my Gamma experimentation after they introduced credits, limiting my ability to discover their best use cases. The tool is still valuable, but credit anxiety changed how I engage with it.

I hit Claude’s limits 7 times without visibility into my usage, creating a pattern of frustration that makes me consider alternatives despite genuinely preferring Claude’s capabilities.

In this market you shouldn’t just be optimizing for short-term revenue generation. You should also focus on whether you’re building (or destroying) long-term product-market fit. Because …

👉 When users experience surprise limits at peak engagement moments, they don’t think “I should upgrade.” They think “I should find a tool that respects my time and doesn’t manipulate me.”

👉 When users face account restrictions because your product violated third-party ToS without warning them, they don’t just churn quietly. They actively warn their networks.

👉 When users can’t manage their usage because you’ve hidden the metrics, they don’t optimize consumption. They ration engagement and limit their discovery of your product’s value.

The question every AI founder should be asking: What are we optimizing for?

The founders who win are the ones who build trust through transparency, match pricing to actual usage patterns, and treat users like intelligent adults making informed decisions rather than conversion targets to be manipulated.

I know which products I’m recommending to my network. And I know which ones I’m quietly removing from my stack.

The difference is trust.

P.S. This morning I opened OpenArt to generate a header image for this newsletter.

The familiar green indicator showed 9,586 credits in the top corner. I selected nanobanana pro quality. The interface showed 60 credits would be deducted. I clicked generate.

The image rendered beautifully. I checked the balance again: 9,526 credits remaining.

This tiny interaction, repeated dozens of times across my content creation workflow, is what good pricing UX feels like. I’m not being surprised. I’m not being manipulated. I’m not anxious about hidden limits or wondering when I’ll hit a restriction.

I’m making conscious trade-offs about quality versus cost, knowing exactly what I’m spending and what I’m getting. It’s empowerment, not friction. Trust, not anxiety.

That’s the feeling you want your users to have.

If you’re building an AI product and struggling with GTM strategy, pricing architecture, pricing transparency, or how to build trust in a crowded market, let’s talk. I work with founders to transform technical capabilities into go-to-market strategies that actually drive adoption.

Because in the AI era, the best product doesn’t always win. The most trustworthy one does.