Does AI recognize your brand or confuse it with competitors? Large Language Models (LLMs) like ChatGPT don’t browse your website like a human. Instead, they piece together what they "know" about your business from scattered data across the web. Without clear, consistent signals, your brand risks being misrepresented – or ignored entirely.

Here’s what you need to know:

- AI business context means defining your brand as a clear entity with specific attributes and relationships.

- Visibility in AI isn’t about ranking high in search results anymore. It’s about being cited accurately and frequently in AI-generated answers.

- Common issues like vague positioning, inconsistent language, or missing proof can lead to AI skipping your brand for competitors.

- LLMs rely on structured data (like schema markup), third-party sources (e.g., LinkedIn, Crunchbase), and consistent terminology to understand and cite your business.

The takeaway: To stay visible and relevant, you need to align your digital footprint, create clear business context, and ensure AI systems can trust and verify your brand.


Why AI Visibility Fails Without Business Context

4 Common AI Visibility Failure Modes and Their Business Impact

Large Language Models (LLMs) don’t interact with your website the way a person might. Rather than browsing pages, they generate answers from patterns learned across vast amounts of data. When someone asks ChatGPT or Perplexity about your industry, the AI doesn’t pull up your homepage; it pieces together what it “knows” about your brand from scattered hints across the web. If those signals are unclear or inconsistent, the AI might misrepresent your brand or overlook it entirely.

"If your messaging is inconsistent or if your product descriptions are vague and your schema incomplete, AI systems simply skip over your brand in favor of others with clearer context." – Sid Tiwatnee, Founder, Erlin [1]

This isn’t a technical issue – it’s a structural problem. AI systems don’t just see your brand as a website; they interpret it as a collection of attributes, relationships, and evidence. If this picture isn’t clear, the AI defaults to competitors with more precise definitions [1]. This lack of visibility means missing out on the answers that matter most. Recognizing how these systems work is essential to understanding where breakdowns occur.

How LLMs Actually Retrieve Information

Providing clear business context is crucial because LLMs rely on fragmented signals rather than direct lookups. They draw on two sources of knowledge: parametric memory (knowledge stored in the model's weights during training) and retrieval (real-time fetching through Retrieval-Augmented Generation, or RAG). When a user submits a query, systems like Google’s AI Mode break it into multiple sub-questions, a process called "query fan-out" [10][7]. If your business lacks structured and consistent context for these sub-topics, you won’t show up in the final answer, even if your website performs well in traditional search.

Instead of directly pulling from your site, the AI performs entity disambiguation, determining whether “Mercury” refers to a planet, an element, or a software company. Without clear signals – like schema markup, consistent terminology, or corroboration from third-party sources – the AI guesses. Research shows that 76% of pages cited in AI-generated overviews already rank in the top 10 of traditional search results, but ranking alone doesn’t guarantee inclusion [7]. The AI prioritizes sources it can interpret and verify, not just those it can locate. This gap between fragmented signals and retrieval mechanisms leads to the visibility breakdowns discussed below.

4 Common Ways AI Visibility Breaks Down

1. Vague Positioning

Using generic phrases like "passionate about quality" doesn’t help AI systems form a clear understanding of your business. They need specific, category-level descriptors to map your brand accurately. If your About page doesn’t explicitly state “sustainable haircare” or “B2B sales automation,” the AI won’t associate your brand with relevant queries like “best CRM for small teams” [1].

2. Inconsistent Category Language

Calling your product a "moisturizer" on one page and a "face cream" in your schema creates conflicting signals. AI systems rely on structured confidence, meaning they favor information that aligns across multiple sources [1]. If your terminology isn’t consistent, the AI will favor competitors with clearer, more unified language.

3. Missing Proof

AI engines pull data from a variety of sources, including forums, review sites like G2, and support documents [5]. If you don’t provide clear proof – like pricing details, case studies, or quantified outcomes – the AI fills in the gaps with speculative or inaccurate data, which can harm your brand.

"Marie Martens, Co-founder of Tally, noted that her team’s years of answering questions in forums and sharing learnings became part of the AI’s source material." [5]

Without a visible footprint, your brand’s narrative is left to what others say about you.

4. Wrong Entity Associations

This is where visibility can fail dramatically. Homonym collisions occur when your brand name overlaps with a common noun or location, causing the AI to default to the most common meaning [3]. Entity blending happens when information about two similarly named companies gets merged, creating a hybrid profile. Attribute leakage occurs when a competitor’s pricing or features are mistakenly attributed to your brand. For example, the AGNUS model for entity disambiguation achieved an 86.9% macro-F1 score – 10.2% better than traditional methods – but only when stable identifiers and structured data were available [3].

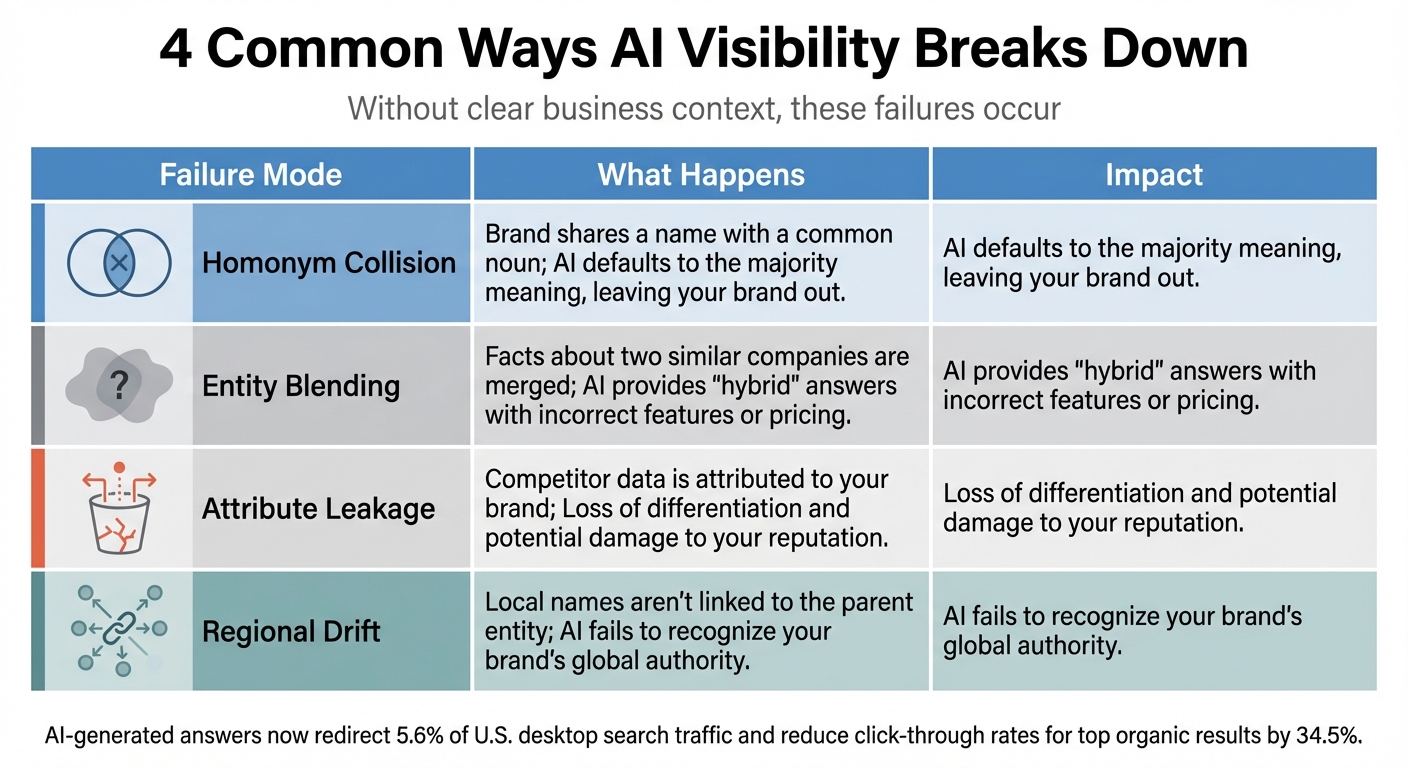

The consequences of these misalignments can be seen in several failure scenarios:

| Failure Mode | What Happens | Impact |
|---|---|---|
| Homonym Collision | Brand shares a name with a common noun | AI defaults to the majority meaning, leaving your brand out |
| Entity Blending | Facts about two similar companies are merged | AI provides "hybrid" answers with incorrect features or pricing |
| Attribute Leakage | Competitor data is attributed to your brand | Loss of differentiation and potential damage to your reputation |
| Regional Drift | Local names aren’t linked to the parent entity | AI fails to recognize your brand’s global authority |

With AI-generated answers now redirecting 5.6% of U.S. desktop search traffic and reducing click-through rates for top organic results by 34.5% [2], the stakes are higher than ever. Without clear business context, you lose more than traffic – you lose the ability to shape your own narrative.

The Context Layer Model: 7 Elements LLMs Need

Large Language Models (LLMs) piece together an abstract understanding of your business by collecting structured signals from your website, third-party databases, social profiles, and online forums. If these signals are incomplete or inconsistent, the AI might misrepresent your business – or worse, overlook it entirely. Below, we’ll explore the seven critical elements that ensure AI accurately understands and represents your brand.

"Brand context is the structured understanding of your brand built from language, schema, content and signals across your site and the web. It’s not your tagline. It’s not your style guide." – Sid Tiwatnee, Founder, Erlin [1]

These elements collectively create the structured confidence AI systems rely on to determine your identity, purpose, and relevance. When all seven are aligned and consistent, your business becomes part of the AI’s knowledge network, increasing your visibility in relevant queries. Let’s break down each element and its role in shaping your brand’s AI profile.

1. Entity Basics: Who, What, and Where

LLMs don’t see your business as just a website – they view it as a semantic entity. To ensure accurate representation, you need to provide clear, consistent details about your business, including your legal name, what you offer, where you operate, and any unique identifiers. This information is typically drawn from your About page, schema markups (e.g., Organization or LocalBusiness schemas), and trusted third-party platforms like Crunchbase, LinkedIn, and Wikidata.

If your brand name overlaps with common words or locations, it’s easy for AI to get confused. For example, without structured identifiers like a unique Knowledge Graph ID or consistent use of your full legal name, the AI might default to a more familiar interpretation, causing your business to be misrepresented or ignored.
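As a rough illustration of the entity basics described above, the sketch below builds Schema.org Organization markup as JSON-LD using Python. The company name, URLs, and the Wikidata ID are placeholders rather than real records, and the helper name `build_organization_jsonld` is hypothetical.

```python
import json

def build_organization_jsonld(name, legal_name, url, description, same_as):
    """Return a Schema.org Organization object serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        # A stable @id lets other markup on the site point at the same entity.
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "legalName": legal_name,
        "url": url,
        "description": description,
        # Third-party profiles that describe the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)

# Placeholder values only; swap in your own verified profiles.
markup = build_organization_jsonld(
    name="ExampleBrand",
    legal_name="ExampleBrand, Inc.",
    url="https://www.example.com/",
    description="B2B sales automation platform for remote teams of 10-50 employees.",
    same_as=[
        "https://www.wikidata.org/wiki/Q0000000",
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
    ],
)
print(markup)
```

The output would typically be placed in a `<script type="application/ld+json">` tag on the About page, with the description kept word-for-word consistent with the visible copy.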

2. Category and Alternatives: Defining What You Are – and Aren’t

AI systems rely on clear categorization to understand where your business fits in the market. This means specifying your category – whether it’s "B2B sales automation" or "sustainable haircare" – and clarifying what you’re not. Vague terms like "innovative solutions" don’t help; instead, use language that mirrors how users search.

Inconsistent categorization can create confusion. For instance, if one page describes your product as a "moisturizer" while another calls it a "face cream", the AI may struggle to position your brand correctly. Using structured relationships, such as semantic triples, can help establish clear connections. A wellness brand audited by Erlin in 2025 provides a good example: the brand failed to appear in searches for "vegan protein brands for women" because its product pages didn’t mention a specific audience, and its About page lacked structured schema. Once these gaps were addressed, the brand started appearing in relevant AI panels within 30 days [1].
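To make the idea of semantic triples concrete, here is a minimal sketch that stores brand facts as (subject, predicate, object) tuples and groups them by predicate. The brand, predicates, and values are all invented for illustration; they are not a standard vocabulary.

```python
from collections import defaultdict

# Each triple is (subject, predicate, object). All names are hypothetical.
triples = [
    ("ExampleBrand", "isA", "vegan protein brand"),
    ("ExampleBrand", "servesAudience", "women"),
    ("ExampleBrand", "isNot", "whey protein brand"),
    ("ExampleBrand Protein Powder", "madeBy", "ExampleBrand"),
]

def facts_about(subject, all_triples):
    """Group every fact stated about one subject by its predicate."""
    grouped = defaultdict(list)
    for s, predicate, obj in all_triples:
        if s == subject:
            grouped[predicate].append(obj)
    return dict(grouped)

print(facts_about("ExampleBrand", triples))
```

Writing the facts out this way makes gaps easy to spot: if no triple states the audience, the page copy and schema probably don't either.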

3. ICP and Use Cases: Who You Serve and When They Need You

AI search thrives on intent. When someone asks, "What’s the best protein powder for runners?" the LLM looks for brands that clearly define their target audience and use cases. To match these queries, you need to outline who your product is for and when it’s meant to be used.

Defining your Ideal Customer Profile (ICP) with specifics – like "best for remote teams with 10–50 employees" or "ideal for e-commerce brands generating $1M–$10M annually" – helps AI pair your business with the right queries. This clarity should be reflected across your website, from product pages to case studies and beyond.

4. Differentiation and Constraints: Highlighting Strengths and Setting Boundaries

LLMs prioritize transparent, detailed content. This means you need to highlight not only your strengths but also your limitations. Comparison pages that openly discuss trade-offs can help AI recommend your business more accurately.

For instance, if your CRM is perfect for small teams but doesn’t support enterprise features, stating that limitation builds trust. Similarly, specifying that your software integrates with Shopify but not WooCommerce ensures accurate recommendations. Differentiation solidifies your position, while proof – like case studies or certifications – backs up your claims.

"The key shift: you’re not just competing for accurate representation. You’re fighting to become a source." – Emma Kessinger, Relevance [7]

5. Proof: Backing Up Your Claims

Concrete evidence is essential for validating your brand’s claims. Case studies, measurable outcomes, certifications, and original research all provide the kind of proof LLMs need to trust your business. For example, if you claim your product increases conversions by 40%, independent reviews or public mentions can reinforce that credibility.

Take Tally, an online form builder, as an example. In 2024, the company improved its AI visibility by actively engaging in community forums and sharing its product roadmap. This approach not only made ChatGPT a top referral source but also boosted weekly signups. Grounding matters on the model side too: one cited result reports that supplying GPT-4 with curated, context-specific evidence raised factual accuracy from 56% to 89% [11].

6. Terminology Map: Speaking the AI’s Language

AI retrieval systems typically match queries to content by comparing embeddings (numeric representations of meaning), so your content needs to connect the different terms that refer to the same concept. For example, "customer lifetime value", "CLV", and "LTV" are commonly used for the same metric. A terminology map helps AI systems recognize your brand no matter how users phrase their queries.

To create a strong terminology map, document all relevant terms, acronyms, regional variations, and common misspellings tied to your products or services. Consistency across your website, documentation, and third-party mentions reduces errors during AI retrieval and ensures your brand is accurately represented.
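One way to keep a terminology map usable is to store it as data and apply it when auditing copy. The sketch below is an assumption about how such a map could look, not a prescribed format: canonical terms map to their variants, and `normalize` rewrites text to the canonical wording.

```python
import re

# Canonical term -> variants (acronyms, synonyms, misspellings). Illustrative only.
TERMINOLOGY_MAP = {
    "customer lifetime value": ["clv", "ltv", "lifetime value"],
    "moisturizer": ["face cream", "moisturiser"],
}

def build_lookup(term_map):
    """Flatten the map so every variant points at its canonical term."""
    lookup = {}
    for canonical, variants in term_map.items():
        lookup[canonical] = canonical
        for variant in variants:
            lookup[variant.lower()] = canonical
    return lookup

def normalize(text, term_map):
    """Replace every known variant in the text with its canonical term."""
    lookup = build_lookup(term_map)
    # Try longer phrases first so a full phrase wins over a shorter one inside it.
    ordered = sorted(lookup, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(term) for term in ordered) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(lambda match: lookup[match.group(1).lower()], text)

print(normalize("Try our face cream", TERMINOLOGY_MAP))
```

Running the same function over website copy, documentation, and third-party profile text gives a quick view of where non-canonical wording still appears.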

7. Trust Signals: Building Credibility with AI

Trust signals are critical for establishing your brand’s reputation with AI systems. These include consistent author bios, verified profiles, and positive mentions on authoritative platforms. Essentially, these signals act as third-party validation of your claims.

A strong external footprint – such as earned media or profiles on platforms like Wikipedia and industry databases – boosts your structured confidence. This consistency makes it more likely that AI systems will include your business in their responses, enhancing your visibility in relevant searches.

How LLMs Learn and Repeat Your Context

Large Language Models (LLMs) don’t actively browse your website. Instead, they piece together your business context using various signals like your site’s structure, third-party profiles, community discussions, and structured data. When these signals align, they create a clear picture of your brand. However, conflicting signals can lead to confusion and misinterpretation.

Where LLMs Pull Context From

LLMs gather information from several sources, starting with your owned assets. Pages like your product descriptions, About section, technical documentation, and metadata written in straightforward, category-specific language are key contributors.

Structured data acts as a reliable anchor for machines. Schema types like Organization, Product, and FAQPage, together with the sameAs property, help AI understand your identity, offerings, and authoritative sources. For instance, linking your website to a Wikidata entry via sameAs can clarify brand ambiguities.

Third-party platforms also play a role in validating your brand. Sites like Wikipedia, Crunchbase, and LinkedIn act as checkpoints for trust. But inconsistencies can hurt you – if your About page calls your business a "B2B sales automation platform" while your LinkedIn profile describes it as "CRM software", the AI might question your credibility.

Community discussions on platforms like Reddit, Stack Overflow, and Quora further shape how AI perceives your brand. These forums often distill brands into concise descriptors like "comprehensive but expensive" or "best for small teams." For example, in 2024, Tally, an online form builder, engaged actively in community discussions and shared its product roadmap. This effort helped make ChatGPT a top referral source for Tally and drove an increase in weekly signups.

Review platforms like G2, Capterra, and Amazon add another layer by offering detailed comparisons of features, pricing, and user satisfaction. If critical details – like your pricing – are hidden behind a "Contact Sales" form, AI might instead rely on less reliable, informal sources.

Lastly, knowledge graphs, whether public (like DBpedia) or proprietary (like Google’s), help LLMs connect your brand to broader categories and relationships. These graphs enhance AI’s ability to understand how your business fits into its ecosystem.

Aligning all these signals is essential. Even small inconsistencies can harm how AI understands and represents your brand, as we’ll explore next.

Why Consistency Across Sources Matters

Given the variety of sources feeding AI, consistency is non-negotiable. LLMs depend on clear and aligned signals to build confidence in your brand. For instance, if your website calls a product a "moisturizer" but your schema markup labels it as a "face cream", the AI might lose confidence, reducing your chances of being included in generated responses.

Inconsistent signals can lead to problems like entity blending, where facts from two similarly named companies are merged into one incorrect profile. Another issue is attribute leakage, where competitor details – like pricing or certifications – are mistakenly attributed to your brand. Studies suggest that 33–50% of brand mentions in open-web text require reasoning to disambiguate correctly [3].

"Mixed messages kill citations. AI cross-checks your voice across channels… Strategy isn’t just ranking higher – it’s showing up as the brand worth quoting." – Jenna Briand, Co-Founder, Program 11 [8]

To avoid these pitfalls, ensure your brand name, product categories, and core claims are consistent across your website, LinkedIn, Crunchbase, and other trusted directories.
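A consistency check like this can be partly automated. The following sketch assumes you have already collected the category label used on each source by hand; the source names and labels are made up, and `find_category_conflicts` is a hypothetical helper that flags any source disagreeing with the majority wording.

```python
from collections import Counter

def find_category_conflicts(descriptions):
    """Return the most common category label and the sources that deviate from it.

    descriptions: dict mapping a source name to the category label used there.
    """
    normalized = {src: label.strip().lower() for src, label in descriptions.items()}
    counts = Counter(normalized.values())
    majority, _ = counts.most_common(1)[0]
    outliers = {src: label for src, label in normalized.items() if label != majority}
    return majority, outliers

# Hypothetical labels gathered from each profile.
profiles = {
    "website_about": "B2B sales automation platform",
    "schema_markup": "B2B sales automation platform",
    "linkedin": "CRM software",
    "crunchbase": "b2b sales automation platform ",
}
majority, outliers = find_category_conflicts(profiles)
print(majority, outliers)
```

Exact string comparison is deliberately strict here; a looser match would hide exactly the kind of wording drift this audit is meant to surface.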

Consistency also applies to visual assets. Advanced models like GPT-4o can interpret UI layouts and screenshots. If your visual elements contradict your text, the AI might deprioritize your brand. Regularly auditing both textual and visual assets is key to maintaining a cohesive brand presence.


30-Day Implementation Plan: Build, Publish, Distribute

This 30-day plan is designed to boost your AI visibility by systematically creating and reinforcing your online presence. Over four weeks, you’ll focus on auditing your current content, addressing gaps, strengthening technical signals, and extending your reach beyond your website. The aim? To go from unnoticed to cited within a month.

Week 1: Context Inventory and Gap Analysis

Start by querying AI tools like ChatGPT, Gemini, Perplexity, and Claude with 20–50 key buyer questions that cover the awareness, consideration, and decision-making stages [6]. Track how often your brand appears, how it’s described, and the overall sentiment. This gives you two crucial metrics: your Presence Rate (frequency of appearance) and Context Quality (accuracy of descriptions) [6][5].
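Presence Rate can be computed from saved responses with very little code. The sketch below assumes the AI answers have already been collected as plain strings (how you collect them is out of scope here) and uses a simple case-insensitive substring match, which is an assumption that will miss misspellings and abbreviations.

```python
def presence_rate(responses, brand):
    """Share of AI responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)

# Invented sample answers for illustration.
sample = [
    "Popular options include ExampleBrand and two other tools.",
    "Most teams choose a general-purpose CRM.",
    "examplebrand is often recommended for small teams.",
    "There are many form builders available.",
]
rate = presence_rate(sample, "ExampleBrand")
print(f"Presence Rate: {rate:.0%}")
```

Context Quality still needs a human read of each answer; a number like this only says whether the brand showed up, not whether it was described correctly.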

Conduct a semantic audit to identify gaps in your content. Map your Ideal Customer Profiles (ICPs) to their typical questions and note where competitors outshine you [6][11]. Check for inconsistencies across third-party platforms, since these can signal weak points [5]. Also, review your schema markup to ensure your core pages include Organization, Service, and FAQPage schema. Without these, AI engines may struggle to understand your brand and offerings [6].

"Your SEO team can optimize every page on your site and still lose AI visibility to a competitor with weaker rankings but stronger brand signals." – Backlinko [5]

Wrap up the week with a prioritized list of missing pages, terminology inconsistencies, weak proof points, and absent structured data.

Week 2: Develop Core Context Assets

Focus on creating the foundational knowledge assets that large language models (LLMs) will rely on. Start with definitive guides for your core methodologies, using a BLUF (Bottom Line Up Front) structure: provide the answer first, then elaborate [14][9]. Create FAQs with headings that match common user queries exactly, making it easier for AI to extract and cite your content [14].

Develop entity-based product pages detailing how your offerings connect to specific use cases. For example, if you’re a project management tool, describe who it’s for ("remote teams of 10–50"), when it’s used ("during sprint planning and retrospectives"), and why it’s effective ("real-time collaboration without needing a separate Slack integration") [2]. Be transparent about pricing: if it’s hidden behind a "Contact Sales" form, AI models may rely on outdated or speculative data from forums like Reddit [5].

Build proof pages with case studies, certifications, and customer testimonials that include measurable outcomes. Use semantic HTML – such as native tables and lists – so AI crawlers can easily process your data [5]. With these assets in place, you’ll be ready to tackle the technical optimizations.

Week 3: Strengthen Technical Signals

This week is all about ensuring your site is technically optimized for AI engines. Add schema markup to key pages: Organization schema for your About page, Product or Service schema for product pages, FAQPage schema for FAQs, and HowTo schema for instructional content [6]. Use the sameAs property to link your website to identifiers like Wikidata, LinkedIn, and Crunchbase, which help clarify your brand identity [4].
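For the FAQ pages mentioned above, FAQPage markup follows a fixed nesting of Question and Answer objects. The sketch below generates it from question and answer pairs; the sample questions are invented, and `build_faq_jsonld` is a hypothetical helper name.

```python
import json

def build_faq_jsonld(qa_pairs):
    """Serialize (question, answer) pairs as Schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)

# Placeholder content; the answers should match the visible page text.
faq_markup = build_faq_jsonld([
    ("Who is ExampleBrand for?", "Remote teams of 10-50 people."),
    ("Does ExampleBrand integrate with Shopify?", "Yes, Shopify is supported."),
])
print(faq_markup)
```

Keeping the questions in the markup identical to the on-page headings avoids introducing a new inconsistency while adding structure.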

Revise your internal links to connect high-authority pages with product, proof, and FAQ pages, creating a semantic web that AI can easily navigate [6]. Make sure your robots.txt file allows access to key AI crawlers [9].
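The robots.txt check can be scripted with Python's standard `urllib.robotparser`. This is a minimal sketch: the crawler names in `AI_CRAWLERS` are an assumed list that you should verify against each vendor's current documentation, and the sample robots.txt is invented.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent names for AI crawlers; confirm the current names with each vendor.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

def crawler_access(robots_txt, url, user_agents=AI_CRAWLERS):
    """Report whether each crawler may fetch the URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}

# Invented example: one crawler is blocked, everything else is allowed.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
access = crawler_access(sample_robots, "https://www.example.com/pricing")
print(access)
```

To test a live site instead of a string, `RobotFileParser` also offers `set_url()` and `read()`, which fetch the file before `can_fetch()` is called.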

Use semantic HTML throughout your site. For instance, structure data with <table>, <ul>, and <ol> tags rather than relying on CSS-styled divs. This makes your content easier for AI to parse and summarize [5]. By the end of this week, your site should be fully optimized for machine readability.
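A quick way to spot pages that lean on styled divs is to count tags. The sketch below uses the standard-library `html.parser` on an invented HTML snippet; the choice of tags to report is an assumption, and a low table or list count is only a hint to review the page, not proof of a problem.

```python
from collections import Counter
from html.parser import HTMLParser

class TagCounter(HTMLParser):
    """Count how often each opening tag appears in a document."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        self.counts[tag] += 1

def semantic_tag_report(html, tags=("table", "ul", "ol", "div")):
    """Return the number of occurrences of each tag of interest."""
    counter = TagCounter()
    counter.feed(html)
    return {tag: counter.counts[tag] for tag in tags}

# Invented snippet: pricing shown once with divs and once with a real table.
page = (
    "<div class='row'><div>Plan</div><div>$10</div></div>"
    "<table><tr><td>Plan</td><td>$10</td></tr></table>"
    "<ul><li>Feature A</li></ul>"
)
report = semantic_tag_report(page)
print(report)
```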

Week 4: Broaden Your Reach and Monitor Progress

In the final week, extend your presence beyond your website while setting up monitoring tools. Share insights and links to your guides on platforms like Reddit and Quora, adding genuine value to discussions [5][9]. Update profiles on review sites like G2 and Capterra with detailed feature descriptions, and encourage customers to leave in-depth reviews (200+ words), as AI models prioritize detailed content over simple star ratings [5].

Secure third-party citations, claim your Google Knowledge Panel, and update your Wikidata entry [5]. Set up a dashboard to monitor Knowledge-Based Indicators (KBIs) such as Entity Coverage (topics you define), Inclusion Frequency (how often you appear in AI summaries), and Share of AI Voice (your mention rate compared to competitors) [11][2].

| Week | Focus Area | Key Deliverables |
|---|---|---|
| Week 1 | Inventory & Gaps | 20–50 Buyer Questions, Presence Report, Semantic Audit [6][11] |
| Week 2 | Core Assets | Guides, Entity-Based Pages, Transparent Pricing [14][5] |
| Week 3 | Technical Signals | Schema Markup, Semantic HTML, Entity Identifiers [6][5] |
| Week 4 | Distribution & Monitoring | Reddit/Quora Engagement, G2 Updates, KBI Dashboard [5][9] |

How to Measure Your Strategic Visibility

After implementing your context-building strategy, it’s crucial to measure whether your efforts are translating into accurate representation across AI platforms. Instead of relying on traditional SEO metrics, focus on how tools like ChatGPT, Claude, or Gemini reference your brand. The goal is to track your brand’s presence, accuracy, and consistency in these AI-generated responses.

Prompt Testing and Citation Tracking

Start by identifying 20–50 high-value buyer questions that span the awareness, consideration, and decision stages. These might include:

- Problem-aware queries: "What causes data pipeline failures?"

- Solution-aware searches: "Best tools for real-time data integration"

- Branded questions: "What does [Your Company] do?"

- Competitor comparisons: "How does [Your Company] compare to [Competitor]?"

Query major AI platforms like ChatGPT, Claude, Gemini, and Perplexity on a monthly basis. Use clean, incognito sessions to ensure past interactions don’t influence the results. Track two key metrics: Presence Rate (how often your brand is mentioned) and Citation Frequency (how often your brand is cited as a source).
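Citation Frequency can be tracked separately from mentions by looking at the URLs each answer cites. The sketch below assumes you have recorded the cited URLs per response as lists of strings; the URLs and domain are placeholders, and matching on hostname is one reasonable definition among several.

```python
from urllib.parse import urlparse

def citation_frequency(cited_urls_per_response, brand_domain):
    """Share of responses that cite at least one URL on the brand's domain."""
    if not cited_urls_per_response:
        return 0.0

    def cites_brand(urls):
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            # Match the domain itself or any subdomain, but not look-alike domains.
            if host == brand_domain or host.endswith("." + brand_domain):
                return True
        return False

    hits = sum(1 for urls in cited_urls_per_response if cites_brand(urls))
    return hits / len(cited_urls_per_response)

# Invented citation lists, one per AI response.
citations = [
    ["https://www.example.com/guides/pricing", "https://reviews.invalid/tools"],
    [],
    ["https://notexample.com/"],
    ["https://docs.example.com/faq"],
]
frequency = citation_frequency(citations, "example.com")
print(f"Citation Frequency: {frequency:.0%}")
```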

"In the LLM era, visibility isn’t earned once. It’s rebuilt every time someone asks a question." – Shane H. Tepper, Founder [13]

It’s not just about being mentioned; it’s about where and how you’re mentioned. Research shows that 82.5% of AI Overview citations point to deeper pages (content that’s two or more clicks away from the homepage) rather than surface-level summaries [15]. An uncited mention might increase visibility, but it doesn’t establish authority. A citation that links back to your content, on the other hand, positions your brand as a trusted source. This approach ties directly into the context-building strategies discussed earlier.

Representation Scoring and Drift Detection

Beyond citation tracking, evaluate how accurately AI platforms represent your brand. Compare AI-generated descriptions with your internal documentation to ensure consistency. Look out for issues like:

- Entity blending: Mixing your brand’s details with those of competitors.

- Homonym collisions: Confusing your brand with similarly named entities or common terms.

Keep an eye on drift – when AI-generated content shifts or degrades over time due to model updates, new training data, or external misinformation. Use tools like the LLMO Resilience Score to measure how consistently your brand is represented across updates [13]. If you notice drift, take action: audit your schema markup, update profiles on platforms like G2 and Crunchbase, and ensure your "sameAs" fields link to stable identifiers like Wikidata.
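Drift can be approximated by comparing each month's AI-generated description with your canonical one. The sketch below uses token-level Jaccard similarity, which is a stand-in chosen for simplicity and not the LLMO Resilience Score or any vendor's method; the descriptions, labels, and the 0.5 threshold are all assumptions.

```python
import re

def tokens(text):
    """Lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def similarity(a, b):
    """Jaccard similarity between the token sets of two descriptions."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)

def detect_drift(canonical, snapshots, threshold=0.5):
    """Return labels of snapshots whose similarity to the canonical text is below threshold."""
    return [label for label, text in snapshots if similarity(canonical, text) < threshold]

canonical = "ExampleBrand is a B2B sales automation platform for small teams"
snapshots = [
    ("2025-01", "ExampleBrand is a B2B sales automation platform for small remote teams"),
    ("2025-02", "ExampleBrand is an enterprise CRM with custom pricing"),
]
drifted = detect_drift(canonical, snapshots)
print(drifted)
```

Word overlap misses paraphrases that keep the meaning intact, so flagged snapshots are best treated as a queue for manual review.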

"Authority is now a data property, not a marketing claim." – Go Fish Digital [11]

To round out your measurement process, set up a Knowledge-Based Indicator (KBI) dashboard. Track metrics like:

- Entity Coverage: The number of topics you’ve defined.

- Inclusion Frequency: How often your brand appears in AI summaries.

- Share of AI Voice: How often you’re mentioned compared to competitors.

This feedback loop ensures your strategy remains effective, reinforcing the context layer model and maintaining long-term visibility in AI-driven platforms.
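Share of AI Voice, as described in the list above, can be sketched as each brand's portion of all brand mentions across a set of responses. The brand names and answers below are invented, and counting one mention per brand per response is an assumption about how the metric is defined.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Each brand's share of total brand mentions, counting once per response."""
    mentions = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}

# Invented sample answers.
answers = [
    "ExampleBrand and RivalOne are popular.",
    "RivalOne is the usual pick.",
    "Consider RivalTwo or RivalOne.",
    "ExampleBrand suits small teams.",
]
shares = share_of_voice(answers, ["ExampleBrand", "RivalOne", "RivalTwo"])
print(shares)
```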

Conclusion: Building Context for Long-Term AI Visibility

AI visibility isn’t something you set and forget – it’s an ongoing process that requires regular attention and updates. Leading brands conduct quarterly audits of their definitions, update structured data as their offerings evolve, and monitor their AI representations every month. Ensuring your brand is accurately represented in AI systems is no longer optional.

"If LLMs don’t learn your voice now, your brand might be erased from the collective intelligence of the future." – Kurt Fischman, Founder, Growth Marshal [4]

To stay ahead, focus on building strong foundational signals. Start with the basics: implement detailed Schema.org markup, ensuring consistent use of @id and sameAs properties that link to authoritative profiles like Wikidata and Crunchbase [10]. Keep your taxonomy consistent across all platforms: your website, review sites, community forums, and third-party references. This consistency helps avoid "entity blending", where AI systems merge your brand’s details with those of a similarly named company, as well as homonym collisions with unrelated terms [3].

As the focus shifts from keywords to entities, your visibility now depends on factors like citation frequency, citation accuracy, and your share of the AI-generated conversation – rather than traditional search rankings [2]. Track these metrics monthly by testing prompts with tools like ChatGPT, Gemini, and Perplexity using key buyer questions. Pay attention to any "drift", where AI descriptions start deviating from your intended brand messaging. When this happens, act quickly by updating your knowledge graph and reinforcing signals from high-authority sources [12]. This proactive approach ensures your brand stays visible and accurately represented.

"SEO is no longer about clicks; it’s about citations." – Kurt Fischman, Founder, Growth Marshal [4]

The organizations excelling in AI visibility have teams dedicated to aligning internal signals. Make monthly updates – whether that’s optimizing your site, creating citation-worthy content, or securing reviews – to ensure your brand’s context remains accurate and accessible as AI models continue to evolve [9].

FAQs

How can I make sure AI systems represent my brand accurately?

To make sure AI systems accurately represent your brand, start by establishing a clear and well-organized brand context that AI can easily identify and apply. This means defining the essentials: who your brand is, the categories it fits into, the ideal customers and use cases you serve, your standout features, and solid proof points like case studies or certifications. It’s also helpful to create a terminology map with related synonyms and reinforce trust through consistent author bios, profiles, and mentions from credible third parties.

Spread this information across your website using structured data, internal links, and thoughtfully written content on key pages like your product, about, and proof pages. Ensure external sources echo these details consistently. With your brand context in place, regularly check its accuracy by reviewing where AI systems gather information about your brand, confirming it’s described correctly, and identifying any inaccuracies. By continuously fine-tuning and aligning your content, you can ensure AI portrays your brand in a reliable and accurate way.

What are the risks of inconsistent brand messaging for AI-driven visibility?

Inconsistent brand messaging throws a wrench into the "brand context" that large language models (LLMs) use to understand and represent your business. When your product descriptions, category labels, or proof points don’t align across your content, AI struggles to piece together a clear and unified view of your brand. This lack of clarity can cause your business to be overlooked in AI-generated recommendations, answer panels, or search results, ultimately limiting your visibility.

On top of that, inconsistent messaging can result in misassociation, where AI mistakenly connects your brand to the wrong industry, audience, or even another company. Without a steady use of specific terminology and structured data, LLMs might misrepresent what you offer or fail to set your brand apart. This not only erodes trust but also weakens the overall perception of your brand.

Erratic messaging also complicates efforts to measure and improve your AI visibility. Metrics like citation accuracy and representation scores depend on consistent signals. Without these, tracking your progress or resolving issues becomes much harder, leaving you with fewer opportunities to influence how AI portrays your business.

How do large language models (LLMs) understand and reference my business using structured data?

LLMs rely on structured data from your website and other sources like Schema.org JSON-LD, knowledge graph identifiers, and entity attributes to create a detailed semantic profile of your business. This profile enables them to grasp essential information about your brand, products, and services.

To ensure LLMs consistently reference your business correctly, the structured data must match the visible text on your site. Keeping your content, metadata, and external mentions aligned is key to accurately representing your business in AI-generated outputs.
