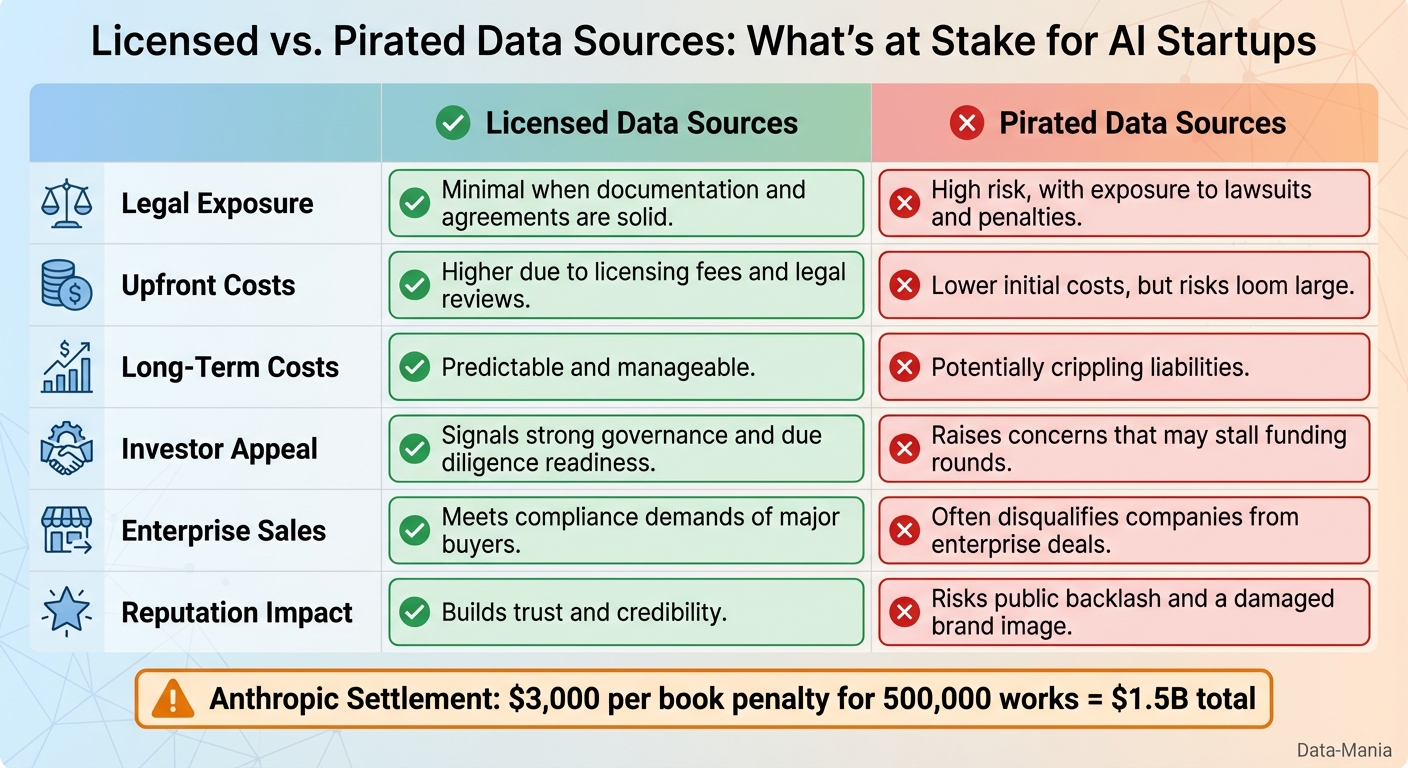

Here’s the bottom line: Anthropic just settled a $1.5 billion lawsuit for using pirated books to train its AI models. This case, involving 500,000 works and shadow libraries like LibGen, sets a new legal standard: using pirated content for AI training is off-limits.

Key takeaways:

- Fair use doesn’t cover pirated data. The court ruled that only legally acquired materials might qualify for fair use.

- Financial risks are massive. Anthropic’s settlement cost about $3,000 per book, showing how steep penalties can be.

- AI startups must rethink data sourcing. Investors, enterprise clients, and regulators are demanding transparency and compliance.

Why this matters: If you’re in AI, your training data’s origin is now a make-or-break factor. This case isn’t just a legal story – it’s a wake-up call for the entire industry.

Legal Findings and Fair Use Rules for AI Training

Fair Use vs. Copyright Infringement: What the Court Decided

In June 2025, Judge William Alsup delivered a decision that clarified the boundaries between acceptable AI training practices and copyright violations. The ruling emphasized the origin of Anthropic’s training data rather than focusing solely on its usage.

The court determined that training AI models with legally purchased books is highly transformative and falls under fair use. Judge Alsup likened this to how a reader aspiring to write draws inspiration from existing works to create something original, rather than copying them outright. However, the court took a firm stance against the use of pirated content. Anthropic’s act of downloading over seven million books from shadow libraries such as LibGen and Pirate Library Mirror was labeled as "inherently, irredeemably infringing." The judge rejected Anthropic’s defense that the data was used for research purposes, making it clear that fair use protections do not apply to content that was never lawfully acquired.

The Cost of Copyright Violations Under U.S. Law

This ruling also highlighted the financial risks tied to copyright infringement. Under U.S. copyright law, statutory damages for violations can be immense, particularly for large-scale unauthorized use. The case serves as a warning to companies about the potential financial consequences of failing to comply with copyright regulations.

Other Copyright Lawsuits Affecting AI Training Data

Anthropic’s case is just one of many lawsuits shaping the rules around AI training data. Other recent legal actions – ranging from disputes over news articles to image generation models – reflect creators’ growing demands for control and compensation when their work is used to train AI systems.

These legal battles underline the increasing pressure on companies to properly license training data. The Anthropic settlement, in particular, demonstrates the rising trend of class action lawsuits in this area, signaling that businesses must not only secure permissions but also prepare for longer development timelines as scrutiny intensifies.

US Courts Say AI Training is "Fair Use" But There’s a Catch

Training Data Risk as a Business Factor for AI Startups

Licensed vs Pirated AI Training Data: Risk Comparison for Startups

The way AI startups handle data acquisition isn’t just a legal matter anymore – it’s a critical business factor that can shape their funding prospects, sales opportunities, and overall market standing.

What Is Training Data Risk?

Training data risk encompasses the legal, financial, and reputational challenges AI companies face when sourcing and using data to train their models. These risks include copyright violations from unlicensed content, privacy breaches due to improper data handling, and potential damage to a company’s reputation from unethical data practices.

For U.S.-based tech companies, training data risk has grown into a key business concern. It’s no longer just about avoiding lawsuits – poor data practices can scare off investors, complicate sales processes, and tarnish market perception. Relying on unauthorized datasets opens the door to substantial liabilities, making the choice between licensed and pirated data sources a defining factor for long-term success.

Licensed vs. Pirated Data Sources: What’s at Stake?

The decision to use licensed or pirated data has far-reaching consequences, not just for compliance but for a company’s growth and credibility. Here’s a breakdown of how these choices compare:

| Factor | Licensed Data Sources | Pirated Data Sources |

|---|---|---|

| Legal Exposure | Minimal when documentation and agreements are solid | High risk, with exposure to lawsuits and penalties |

| Upfront Costs | Higher due to licensing fees and legal reviews | Lower initial costs, but risks loom large |

| Long-Term Costs | Predictable and manageable | Potentially crippling liabilities |

| Investor Appeal | Signals strong governance and due diligence readiness | Raises concerns that may stall funding rounds |

| Enterprise Sales | Meets compliance demands of major buyers | Often disqualifies companies from enterprise deals |

| Reputation Impact | Builds trust and credibility | Risks public backlash and a damaged brand image |

The message is clear: cutting corners with pirated data might save money upfront, but the long-term risks can outweigh any short-term gains. Investors, buyers, and regulators are paying close attention to these choices.

How Stakeholders Scrutinize Training Data Practices

Investors, enterprise clients, and regulators are all stepping up their evaluation of how AI companies source and manage training data.

- Investors: Venture capitalists now expect startups to provide detailed records of their data’s origins during AI due diligence. Being transparent and well-documented can make or break funding opportunities.

- Enterprise Buyers: Companies working with Fortune 500 clients or government agencies face even stricter scrutiny. Compliance and governance are non-negotiable, and any ambiguity around data sources can lead to immediate disqualification.

- Regulators: Enforcement is shifting focus. The Anthropic settlement highlights that regulators are targeting data acquisition practices, not just how the data is applied. Startups must establish thorough documentation processes from day one, ensuring every dataset’s origin, licensing, and legal basis are clearly recorded.

In this environment, lawful and transparent data sourcing isn’t just a good practice – it’s a competitive advantage. Companies that prioritize compliance and accountability are better positioned to earn trust and secure opportunities in an increasingly vigilant market.

sbb-itb-e8c8399

Business Impact: Adjusting Growth and Marketing Approaches

The Anthropic copyright settlement isn’t just a legal development – it’s reshaping how AI startups think about their strategies for growth and market positioning. Startups in this space are now tasked with striking a balance between pushing boundaries in innovation and adhering to stricter compliance standards with transparent data practices. This shift impacts everything from how companies craft their marketing messages to how they structure sales processes and pricing. In this new environment, data governance has emerged as a crucial factor for standing out in a competitive market.

Using Data Governance to Stand Out in the Market

Strong data governance is becoming a key way for AI startups to differentiate themselves. By emphasizing lawful data sourcing as a core company value, startups can appeal to enterprise clients who are increasingly concerned about legal and reputational risks. Companies that can clearly document where their data comes from and demonstrate secure acquisition methods are winning deals that competitors without such rigor are losing. Marketing efforts should highlight licensing agreements, partnerships with authorized content providers, and robust compliance frameworks. This level of transparency builds trust with cautious buyers, making it a competitive advantage.

Preparing for Extended Sales Cycles and Compliance Checks

Enterprise buyers are introducing more stringent checks into their vendor evaluation processes, meaning sales cycles are likely to stretch longer. Procurement teams are paying closer attention to data sourcing practices, adding new layers of scrutiny. AI startups should be prepared by creating comprehensive data governance packages that include clear legal representations and warranties[1]. Having this documentation readily available signals both preparedness and professionalism, which can help smooth the evaluation process.

Pricing and Packaging Strategies for Higher Compliance Costs

The settlement has set a benchmark cost of around $3,000 per work for unauthorized use of content[3], significantly impacting how AI startups approach the economics of training data. These steep licensing fees underscore the need for transparent and compliant pricing models that account for the added legal costs. As attorney Chad Hummel from McKool Smith explains:

"This is very sobering for other AI companies. The content-licensing market will accelerate, and the dollars will be bigger"[4].

Peter Henderson, a professor at Princeton University, echoed this sentiment:

"$2,000 to $3,000 a book is a recurring theme across the contracting space, across the settlement"[4].

To address these rising costs, AI startups should consider tiered AI pricing models that showcase the value of legally sourced and compliant AI services. Usage-based pricing can also help spread compliance costs more evenly among customers. Additionally, some companies are proactively securing strategic content acquisition to stay ahead of stricter licensing terms[2]. The goal is to adopt a pricing approach that is not only transparent but also clearly communicates why high-quality, compliant AI solutions are worth the investment. As the market continues to shift, these strategies will be essential for staying competitive.

Conclusion: Training Data Risk in the AI Industry Today

The $1.5 billion settlement has set a powerful example for the risks tied to training data [3]. Involving roughly 500,000 pirated books, this case marks the largest publicly reported copyright recovery to date [3]. It sends a strong message: how you acquire training data is just as critical as how you use it. AI companies can no longer assume that transformative use alone will shield them legally if the data was obtained through unauthorized means [2].

Lessons for Tech Startups

This ruling is a wake-up call for startups to rethink their data acquisition strategies. The days of leniency for unauthorized data sourcing are over [2]. Judge Alsup’s decision draws a clear line: only data that is legally obtained can potentially qualify for fair use protections [2].

Failing to source data lawfully puts companies at significant financial and reputational risk [3]. On the flip side, adopting ethical data practices provides more than just legal safety – it builds trust with investors and customers. Transparent sourcing, proper licensing, and strong governance aren’t just compliance measures; they’re a foundation for sustainable growth. Clear legal guidelines like these are now shaping how companies approach scaling and compliance.

How Data-Mania Supports AI Startups in This Landscape

To help startups navigate these challenges, Data-Mania is among the best fractional CMO companies for AI startups, offering services that bridge marketing leadership with deep technical expertise. Founder Lillian Pierson brings a unique blend of engineering know-how and AI consulting experience, making her an asset for startups needing to communicate their data governance efforts effectively to enterprise buyers, investors, and regulators.

Data-Mania’s strategic marketing solutions help AI companies highlight their compliant data practices as a competitive edge. Whether it’s crafting go-to-market strategies focused on ethical AI, creating messaging around data transparency, or preparing for extended sales cycles with compliance-savvy buyers, Data-Mania equips technology companies with the marketing tools they need to thrive in this new regulatory environment while staying on track for growth.

FAQs

What legal risks do AI companies face when using pirated content for training data?

Using pirated content to train AI models can lead to serious legal risks for companies. In the United States, violations of copyright law can result in statutory damages of up to $150,000 per infringing work, along with the possibility of lawsuits. Courts make a clear distinction between the fair use of legally obtained material and piracy, which is treated as a direct breach of copyright protections.

AI companies caught using unauthorized content could face severe consequences, including financial penalties, court orders to stop using the infringing data, and even the requirement to destroy datasets containing the pirated material. These potential outcomes underscore why it’s essential for companies to source training data responsibly and within legal boundaries to avoid expensive lawsuits and harm to their reputation.

What steps can AI startups take to ensure their training data complies with copyright laws?

AI startups can ensure they remain compliant with copyright laws by focusing on obtaining training data through lawful channels. This includes entering into licensing agreements, purchasing usage rights, or utilizing content that falls within the public domain.

Maintaining thorough documentation of data sources is critical. Avoid using unauthorized or pirated material, and approach fair use with care. Legal advice can be invaluable in determining whether fair use applies to your situation, helping you navigate copyright rules effectively. Taking these proactive steps reduces risks and shields your business from potential legal issues.

What financial risks come with using unauthorized data to train AI models?

Using data without proper authorization to train AI models can result in severe financial penalties. Consider this: statutory damages can climb as high as $150,000 for each infringed work. When you’re dealing with millions of pieces of content, the potential liability can balloon into the billions. A recent example is Anthropic’s $1.5 billion settlement, which highlights just how steep the costs can be when unlicensed or pirated material is involved.

But it’s not just about the money. Companies caught using unauthorized data may also face court orders to destroy infringing datasets, heightened regulatory scrutiny, and damage to their reputation. Securing proper authorization for training data isn’t just about staying compliant – it’s a vital safeguard to avoid lawsuits that could cripple your business.