In 2026, the Jobs-to-be-Done (JTBD) framework for AI products needs a complete rethink. Why? Buyers no longer want tools to assist them – they want AI to do the work for them. The old JTBD model, focused on productivity and features, misses the mark. AI products today must answer these questions:

- What work can the AI reliably take over?

- How much oversight is required?

- When are the results reliable enough to act on?

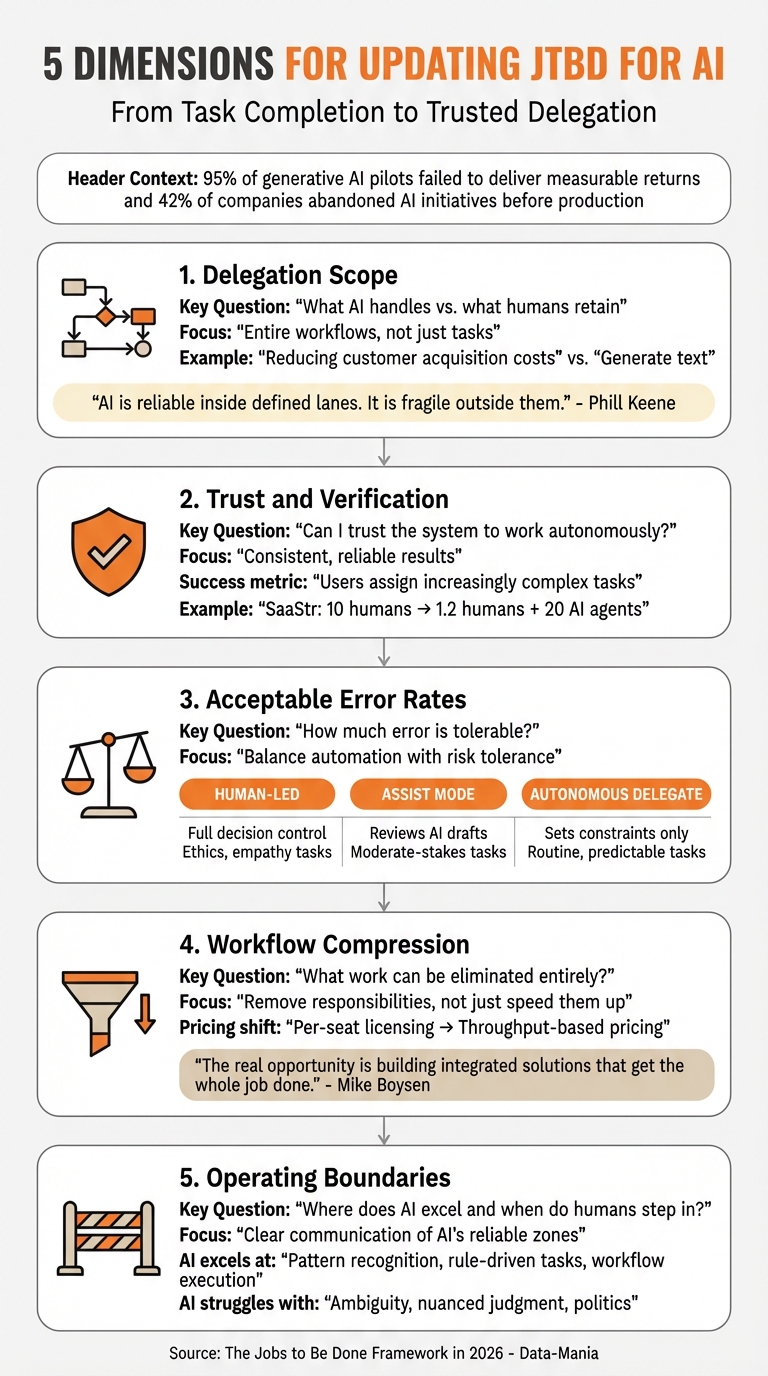

Here’s the problem: Despite an eightfold surge in enterprise AI spending in 2025, 95% of generative AI pilots failed to deliver measurable returns, and 42% of companies abandoned AI initiatives before production. The issue isn’t the tech – it’s how products are positioned. Buyers now prioritize trust, delegation, and outcomes over features.

To succeed, AI companies need to update the JTBD framework across five dimensions:

- Delegation Scope: Define what AI handles fully vs. what humans retain.

- Trust and Verification: Build confidence through reliable, consistent results.

- Acceptable Error Rates: Balance automation with risk tolerance.

- Workflow Compression: Eliminate work entirely, not just speed it up.

- Operating Boundaries: Clearly communicate where AI excels and when humans step in.

The takeaway? Stop positioning AI as a set of features. Instead, focus on delivering trusted outcomes that buyers can depend on. Companies that make this shift will move from endless pilot programs to long-term adoption and loyalty.

Why Classic JTBD Doesn’t Work for AI Products

How SaaS-Era JTBD Focused on Tasks and Features

The traditional Jobs-to-be-Done (JTBD) framework thrived in a world where software acted as an extension of human effort. It revolved around one central question: "What task is the user trying to complete?" This shaped everything from feature development to success metrics like time-on-task and feature adoption. SaaS companies built their value propositions around workflows and measured success by seat count and login frequency.

This model worked well because SaaS tools were deterministic – input A reliably produced output B. For example, a CRM system tracked leads, and project management software kept tasks organized. Crucially, humans remained in control of these processes. AI, however, operates in a probabilistic manner, taking over tasks and performing them autonomously.

"AI is a workforce shift, not a feature update. Understanding that distinction is the difference between incremental improvement and structural advantage." – Phill Keene, Sales Leader at ConnexAI [3]

When AI systems take on the work instead of assisting with it, traditional JTBD metrics lose their relevance. The focus shifts from human productivity to autonomous throughput. This means AI products need to move beyond feature lists and instead deliver trusted, autonomous outcomes. Metrics and positioning must evolve to reflect this new reality.

What AI Buyers Actually Care About: Trust, Delegation, and Outcomes

With this shift, AI buyers are no longer just looking for tools – they want systems they can trust to operate independently. Their concerns center around three key questions: "Can I trust the system to work autonomously?" "What tasks can I safely delegate to it?" and "How reliable are its outcomes?" By 2026, buyers evaluate AI products based on delegation scope, acceptable error rates, and trusted outcomes. These considerations go far beyond the classic JTBD model, which assumes ongoing human oversight.

The data paints a clear picture. While 80% of companies have deployed generative AI, 80% of them report no measurable impact [6]. Additionally, 90% of function-specific AI use cases remain stuck in pilot phases [6]. The problem isn’t the technology – it’s the way it’s positioned. Many companies still emphasize productivity and features, while buyers are focused on trust and clear boundaries for delegation.

"AI can take over work, but it is difficult for AI to directly take over trusted institutional nodes." – Joy Wan, BestSign [2]

Until this gap between product positioning and buyer priorities is addressed, the trend of high pilot rates and low adoption will likely continue. AI products need to prioritize trust and outcomes to move beyond these barriers.



5 Dimensions for Updating JTBD for AI


These five dimensions reshape the Jobs-to-be-Done (JTBD) framework to better fit AI’s capabilities, focusing on trusted delegation rather than just task completion. The goal is to determine what AI can reliably handle, how much oversight it needs, and where it works best.

Delegation Scope: What AI Handles vs. What Humans Retain

This dimension defines the division of labor between AI and humans. The focus shifts from automating specific tasks to allowing AI to manage entire workflows. Instead of assigning AI to "generate text", companies should aim for broader outcomes like "reducing customer acquisition costs" or "accelerating drug discovery" [7][9].

"AI is reliable inside defined lanes. It is fragile outside them. That is not a weakness. It is a design constraint." – Phill Keene, Sales Leader, ConnexAI [3]

AI thrives in structured environments with clear rules, excelling at tasks like processing high volumes of data or executing workflows. However, it struggles with ambiguity, subjective judgment, and internal politics [3]. To succeed, businesses must identify tasks requiring full coverage – such as reviewing every customer call – while leaving nuanced decisions to humans. Problems arise when probabilistic AI is introduced into processes that demand deterministic precision without redefining the scope [3].

Mapping workflows to distinguish fully automatable tasks and defining escalation procedures are critical steps [8]. Success is measured by metrics like reduced cycle times, lower costs per transaction, or improved margins.

The next step is about building trust in AI’s outputs.

Trust and Verification: Building Confidence in AI Results

This dimension focuses on reducing oversight by ensuring AI delivers consistent, reliable results. Trust isn’t built on promises but on performance. AI needs to move beyond offering suggestions to autonomously delivering dependable outcomes [5].

In January 2026, Jason Lemkin, founder of SaaStr, transitioned his sales operations from a team of 10 humans to 1.2 humans supported by 20 AI agents. By integrating AI agents into the workflow and even assigning them desk names, the company maintained its revenue while transforming its labor model [5].

"The agent earns trust through insane value. The customer starts giving it harder tasks because it’s proven it can handle the easy ones. The customer stops double-checking its work because the work is consistently good." – Jason Lemkin, Founder, SaaStr [5]

Trust grows when AI uses customer-specific data rather than generic lookups [5]. Built-in governance, including clear escalation paths for edge cases, further strengthens confidence [3]. Monitoring whether users assign increasingly complex tasks to AI can serve as a key trust metric. As trust deepens, oversight shifts from sampling to full coverage, reducing operational risks [3].
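One way to make the "increasingly complex tasks" signal concrete is to compare the average complexity of recently delegated tasks with earlier ones. The sketch below is illustrative only: the complexity scores, the weekly cadence, and the function name `delegation_trend` are assumptions, not something the cited sources define.

```python
from statistics import mean


def delegation_trend(complexity_scores: list[float]) -> float:
    """Return mean complexity of the later half minus the earlier half.

    A positive value suggests users are handing the AI harder work over time.
    The scoring scale itself is a hypothetical input chosen by the team.
    """
    if len(complexity_scores) < 4:
        raise ValueError("need at least 4 observations to compare halves")
    midpoint = len(complexity_scores) // 2
    return mean(complexity_scores[midpoint:]) - mean(complexity_scores[:midpoint])


# Hypothetical weekly averages of task complexity delegated to the agent.
weekly_complexity = [1.0, 1.5, 1.5, 2.0, 3.0, 3.5]
trend = delegation_trend(weekly_complexity)
print(f"Delegation trend: {trend:+.2f}")
```

A flat or negative trend would not prove distrust, but it is a prompt to ask users why harder tasks are still being held back.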

With trust in place, the focus turns to acceptable error margins.

Acceptable Error Rates: Balancing Risk and Automation

This dimension defines how much error is tolerable, depending on factors like reversibility, safety, and task complexity [10]. Tasks with minimal consequences for errors allow for deeper automation, while high-stakes tasks demand near-perfect accuracy and human oversight [10].

Buyers may accept probabilistic errors if AI ensures complete workflow coverage, such as auditing every invoice instead of sampling 10%, as long as escalation paths are clearly defined [3]. The maturity of the AI model also matters – new tasks may require human review, while established ones can operate autonomously [10].
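The coverage argument is easier to see with numbers. The sketch below uses hypothetical figures (10,000 invoices, a 2% error rate, an AI that catches 90% of errors) and assumes human reviewers catch every error in the 10% they sample. None of these values come from the cited sources.

```python
def errors_caught_by_sampling(total_items: int, error_rate: float,
                              sample_fraction: float) -> float:
    """Expected errors found when humans review only a sample.

    Assumes reviewers catch every error inside the sample (an optimistic
    assumption made for illustration).
    """
    return total_items * error_rate * sample_fraction


def errors_caught_by_full_audit(total_items: int, error_rate: float,
                                ai_recall: float) -> float:
    """Expected errors found when an imperfect AI reviews every item."""
    return total_items * error_rate * ai_recall


# Hypothetical inputs, chosen only to illustrate the tradeoff.
invoices = 10_000
error_rate = 0.02
sampled = errors_caught_by_sampling(invoices, error_rate, sample_fraction=0.10)
full = errors_caught_by_full_audit(invoices, error_rate, ai_recall=0.90)
print(f"Sampling catches about {sampled:.0f} errors; full audit about {full:.0f}")
```

Under these assumptions an imperfect reviewer with full coverage surfaces far more errors than a perfect reviewer with 10% coverage, which is why escalation paths for the remaining misses matter more than a flawless model.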

| Control Mode | Role of Human | AI’s Role | Best Use Case |
|---|---|---|---|
| Human-Led | Full decision control | Provides evidence and tradeoffs | Ethics, empathy, or subjective judgment [10] |
| Assist Mode | Reviews AI drafts | Accelerates workflows | Moderate-stakes tasks with manageable errors [10] |
| Autonomous Delegate | Sets constraints, reviews outcomes | Operates independently within limits | Routine, predictable tasks [10] |

Evaluate tasks for automation based on reversibility, safety, and logic type, alongside ROI factors like frequency and data readiness [10]. Pinpoint critical moments in workflows – like sending emails or granting permissions – and apply stricter error controls at those stages [10].
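The control modes in the table can be turned into a simple triage rule. This is a minimal sketch: the `Task` fields and the order of the checks are assumptions made for illustration, and a real evaluation would also weigh ROI factors such as frequency and data readiness.

```python
from dataclasses import dataclass
from enum import Enum


class ControlMode(Enum):
    HUMAN_LED = "Human-Led"
    ASSIST = "Assist Mode"
    AUTONOMOUS = "Autonomous Delegate"


@dataclass(frozen=True)
class Task:
    name: str
    reversible: bool            # can a mistake be undone cheaply?
    safety_critical: bool       # could an error cause serious harm?
    requires_judgment: bool     # ethics, empathy, or subjective calls
    proven_in_production: bool  # has the AI handled this task reliably before?


def choose_control_mode(task: Task) -> ControlMode:
    """Map a task to a control mode (hypothetical rule ordering)."""
    if task.safety_critical or task.requires_judgment:
        return ControlMode.HUMAN_LED
    if task.reversible and task.proven_in_production:
        return ControlMode.AUTONOMOUS
    # New or hard-to-reverse tasks stay under human review.
    return ControlMode.ASSIST


tagging = Task("tag support tickets", True, False, False, True)
refunds = Task("issue refunds", False, False, False, True)
layoffs = Task("select roles to cut", False, True, True, False)

for task in (tagging, refunds, layoffs):
    print(f"{task.name}: {choose_control_mode(task).value}")
```

Putting the safety and judgment checks first means a task never reaches the autonomous tier just because it is reversible.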

Workflow Compression: Eliminating Work, Not Just Speeding It Up

This dimension focuses on reducing the overall workload, not merely accelerating tasks. It’s about removing responsibilities entirely from human operators.

"AI changes who performs the work. It changes how fast the work happens… It changes the relationship between capacity and accountability." – Phill Keene, Sales Leader, ConnexAI [3]

By handling entire jobs – from data collection to execution – AI frees humans to focus on higher-value activities [3]. Pricing is evolving too, shifting from per-seat licensing to throughput-based pricing, where value is tied to output and capacity rather than labor reduction [3][9].

"The real business opportunity isn’t in selling the component; it’s in building the integrated solution that gets the whole job done." – Mike Boysen, Founder, PJTBD [9]

Instead of offering intelligence through APIs, companies should aim to deliver integrated solutions that complete entire jobs. This approach prioritizes capacity expansion – reducing backlogs and shortening cycle times – before focusing on cost savings [3].

Operating Boundaries: Defining AI’s Reliable Zones

The final dimension clarifies where AI operates dependably and when human intervention is required. AI performs well in structured, rule-driven tasks like pattern recognition and workflow execution but struggles with ambiguity or nuanced instructions [3].

Clear communication of AI’s operational boundaries is essential, both in product design and market positioning. For edge cases, human oversight ensures the system stays within its limits [3][8]. Treat AI systems as adaptive infrastructure that improves over time through monitoring and retraining, rather than static tools [3].

How to Reposition AI Products Around Trusted Outcomes

Why Feature-Focused Messaging Fails for AI

In the SaaS era, feature-focused messaging made sense. Buyers could easily connect a feature like "bulk email" to a clear outcome – faster outreach. But AI complicates that equation. When terms like "natural language processing" or "machine learning models" are thrown around, they don’t automatically translate into trust or confidence for buyers trying to delegate tasks.

Here’s the reality: 96% of C-suite leaders believe AI will boost productivity, yet 77% of employees say their workloads have increased instead of decreased [12]. Why? The messaging often highlights what AI can do, but not what it enables humans to stop doing.

"2026 is the year we stop asking ‘where does the AI button go?’ and start asking ‘what work can we actually take off someone’s plate?’" – Yulia Lápicus, Product Designer [11]

Feature lists might showcase capability, but they miss the ultimate question buyers are asking: Can I trust this system to handle the work so I don’t have to worry about it? This gap underscores the need for messaging that focuses on dependable, outcome-driven automation.

How to Message Around Trustworthy Results

To resonate with AI buyers, messaging must shift its focus from features to outcomes. Buyers want confidence that AI can safely handle tasks and free them up for other priorities.

Here are three key adjustments to make AI messaging more effective in 2026:

- Position AI as a coworker, not a tool: The goal is to create trust so deep that customers start referring to your AI as a teammate, even giving it a name. As Jason Lemkin, Founder of SaaStr, puts it:

"The test is simple: Would your customers miss your AI agent if it disappeared tomorrow? Not ‘would they notice’ – would they actually miss it? Would they feel like they lost a teammate?" [5]

- Clearly define safety tiers: Move beyond vague promises of "AI-powered automation." Be explicit about what tasks are fully automated, what requires human oversight, and what remains manual. Features like "undo", "rollback", and "activity logs" shouldn’t be buried in documentation – they should be front and center [11].

- Shift from per-user pricing to throughput-based value: Instead of selling user licenses, highlight how AI expands capacity. For example, emphasize capabilities like reviewing all contracts instead of just samples, clearing backlogs, or reducing cycle times from weeks to hours [3]. This reframes AI as added capacity that eliminates inefficiencies and uncovers gaps teams didn’t even realize existed.

Here’s how traditional SaaS messaging compares to a more outcome-driven approach for AI in 2026:

| Traditional SaaS Messaging | 2026 AI Outcome Messaging |
|---|---|
| "AI-powered insights" | "Audit every transaction instead of sampling 10%" |
| "Faster content generation" | "Remove content review from your team’s plate" |
| "Smart recommendations" | "Proactively flag risks before they become problems" |
| "Productivity boost" | "Compress 3-week cycles into 3-day cycles" |

The companies thriving in 2026 aren’t leading with technical jargon or model specs. Instead, they focus on the specific tasks buyers can stop worrying about and the safeguards that make delegation trustworthy.

How to Use the Updated JTBD Framework in Your GTM Strategy

To adapt the Jobs-to-be-Done (JTBD) framework for AI, it’s essential to weave trust and risk considerations into your go-to-market (GTM) strategy.

How to Research Buyer Concerns About Delegation and Trust

Traditional JTBD interviews often focus on the features buyers want. But in 2026, that approach misses the mark. Instead, conduct "struggling moment" interviews to uncover the specific worries – those nagging "what ifs" – that stop buyers from trusting AI with critical tasks [14]. Ask them to recount a time they hesitated to delegate a task to AI or had to step in to fix an error.

The key is identifying the precise moment their previous approach failed. For instance, in healthcare, 86% of new product launches fail – not because the technology doesn’t work, but because they miss the real customer need [14].

"Trust is not built when everything works. Trust is built when the AI is wrong, the data is messy, the user is in a hurry, and the product still feels safe to use." – Fentrex Solutions [13]

Focus your research on the five properties of trust:

- Predictability: Can users anticipate the AI’s actions?

- Legibility: Do they understand why it acted the way it did?

- Controllability: Can they guide or steer the AI?

- Reversibility: Can they undo mistakes?

- Boundaries: Are the AI’s limits clear?

Ask buyers to share examples of when these factors mattered most. For high-stakes decisions, explore whether they prefer tangible evidence, like source citations, over abstract confidence scores that might create false certainty.

Your research should also map out the verification ladder – the level of oversight buyers need based on the risk involved. For low-risk tasks, a quick review may suffice, but high-stakes decisions might require more rigorous steps, like multi-layered confirmation or dual control [13]. When discussing workflows, pay attention to when buyers prefer AI to "recommend, not act" versus when they’re comfortable with full automation.
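A verification ladder can be recorded as a small lookup that research findings fill in. The sketch below is an assumption-heavy illustration: the three risk tiers, the `Oversight` type, and the placement of "multi-layered confirmation" on a middle rung are hypothetical choices, not a structure defined by the cited source.

```python
from enum import Enum
from typing import NamedTuple


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"  # assumed middle tier, not named in the article
    HIGH = "high"


class Oversight(NamedTuple):
    verification: str
    ai_may_act: bool  # False means "recommend, not act"


# Hypothetical ladder; replace the entries with what buyers actually report.
VERIFICATION_LADDER = {
    Risk.LOW: Oversight("quick review", ai_may_act=True),
    Risk.MEDIUM: Oversight("multi-layered confirmation", ai_may_act=False),
    Risk.HIGH: Oversight("dual control", ai_may_act=False),
}


def oversight_for(risk: Risk) -> Oversight:
    """Look up the verification step a buyer expects at a given risk level."""
    return VERIFICATION_LADDER[risk]


for level in Risk:
    step = oversight_for(level)
    mode = "act" if step.ai_may_act else "recommend only"
    print(f"{level.value}: {step.verification} ({mode})")
```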

These insights will help you segment markets by their tolerance for risk.

How to Segment Markets by Risk Tolerance

Once you understand trust issues, you can divide your market based on risk tolerance and the impact of decisions. Segment markets by examining what decision the AI replaces, who currently owns it, and the cost of errors [16].

"Starting with a ‘use case’ in AI is like building a house without checking the foundation. It focuses attention on what the system does, not what it decides." – Kristi Pihl, Systems & Spines [16]

Use two dimensions to map your market:

- How contested the logic is – Do stakeholders agree on what "good" looks like?

- How costly a failure would be – Could the impact be regulatory, reputational, or financial?

This creates three clear segments:

- Standardized logic with bounded error: Tasks like fraud detection or inventory optimization, where statistical AI excels.

- Contested logic with high failure cost: Areas like strategic capital allocation or legal review, where humans need to stay in control while AI provides analysis.

- Exploratory synthesis with low consequence: Functions like content ideation or early-stage research, where generative AI can operate with minimal oversight [16].

Stripe Radar is a great example. It works because the decision – approving or blocking a transaction – is straightforward, the logic is standardized across merchants, and any errors escalate to human review [16]. On the flip side, AI strategy copilots often stumble because the decisions they influence are vague, the logic is debated among leadership, and failures may only show up much later [16].

Evaluate each segment by looking at data authority (is the AI working with reliable data?) and integration readiness (is the AI seamlessly embedded into workflows?). The EU AI Act’s tiers – Unacceptable, High, Limited, Minimal – can also guide you in categorizing features by regulatory risk and determining the level of oversight required [15].
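The two-dimension mapping described above can be sketched as a small classifier. Treating each dimension as a yes/no answer is a simplification, and reading "exploratory synthesis" as contested logic with low failure cost is an interpretation, not something the source states. The fourth quadrant (agreed logic, costly failure) is not named in the article, so the function returns `None` for it.

```python
from enum import Enum
from typing import Optional


class Segment(Enum):
    STANDARDIZED_BOUNDED = "Standardized logic with bounded error"
    CONTESTED_HIGH_COST = "Contested logic with high failure cost"
    EXPLORATORY_LOW_CONSEQUENCE = "Exploratory synthesis with low consequence"


def classify_segment(logic_contested: bool, failure_costly: bool) -> Optional[Segment]:
    """Place a decision into one of the three named segments (hypothetical rule)."""
    if logic_contested and failure_costly:
        return Segment.CONTESTED_HIGH_COST
    if logic_contested:
        return Segment.EXPLORATORY_LOW_CONSEQUENCE
    if not failure_costly:
        return Segment.STANDARDIZED_BOUNDED
    # Agreed logic but costly failure: not covered by the article's segments.
    return None


inventory = classify_segment(logic_contested=False, failure_costly=False)
capital_allocation = classify_segment(logic_contested=True, failure_costly=True)
content_ideation = classify_segment(logic_contested=True, failure_costly=False)
print(inventory.value, capital_allocation.value, content_ideation.value, sep="\n")
```

Decisions that land in the unnamed quadrant are worth discussing with buyers directly rather than forcing into one of the three segments.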

How to Build Roadmaps That Expand Trusted Boundaries

Think of your roadmap as a plan to reduce buyer anxiety while proving your AI’s ability to handle increasingly complex tasks [16]. It’s about systematically expanding trust.

Start by evaluating each potential feature based on two factors: how contested the logic is and how costly failure would be [16]. Focus on features where the logic is clear, failure modes are manageable, and users can easily reverse actions. For example, Aisera helped Dartmouth autonomously resolve 86% of IT support requests in early 2026, saving over $1 million annually, because IT support follows standardized logic with minimal risk [15].

Incorporate an "undo" feature with a clear version history for every automated action. If undoing a mistake is difficult or expensive, users will hesitate to delegate tasks to the AI [13]. For high-stakes actions, like data edits, design the AI to propose changes for human approval rather than making silent adjustments [13].
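The undo and propose-for-approval patterns can be sketched with a record that keeps its own version history. This is a minimal illustration under stated assumptions: the `VersionedRecord` class and its method names are hypothetical, and a production system would also persist history and record who approved each change.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VersionedRecord:
    value: str
    history: List[str] = field(default_factory=list)  # previous values, oldest first
    pending: Optional[str] = None                      # proposed but unapproved change

    def apply(self, new_value: str) -> None:
        """Low-stakes path: the AI acts directly, keeping the old value for undo."""
        self.history.append(self.value)
        self.value = new_value

    def propose(self, new_value: str) -> None:
        """High-stakes path: the AI suggests a change without applying it."""
        self.pending = new_value

    def approve(self) -> None:
        """A human accepts the pending proposal."""
        if self.pending is None:
            raise ValueError("no pending proposal to approve")
        self.apply(self.pending)
        self.pending = None

    def undo(self) -> None:
        """Restore the most recent previous value."""
        if not self.history:
            raise ValueError("nothing to undo")
        self.value = self.history.pop()


record = VersionedRecord("Net 30")
record.apply("Net 45")    # automated low-stakes edit
record.undo()             # user reverses it
record.propose("Net 60")  # high-stakes edit waits for approval
value_before_approval = record.value
record.approve()
print(value_before_approval, "->", record.value)
```

Keeping `propose` separate from `apply` means a high-stakes edit is never silent: the stored value changes only after `approve` is called.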

"In AI, PMF is something you rent, not something you own. That means roadmaps must assume PMF erosion and, in some cases, deliberately cause it to happen to stay ahead." – Karan Mehandru, Managing Director, Madrona [4]

Use Outcome-Driven Innovation to identify where customer outcomes are most important but satisfaction (or trust) is lowest [14]. Before building new features, audit five key dependencies:

- Data authority

- Integration into workflows

- Enablement cost

- Logic alignment across stakeholders

- Decision legitimacy

Also, test failure paths by asking: Can any damage be contained, or will it cascade? Is there always a human available to catch errors before they cause harm? Each new capability should move your product up the autonomy ladder – from assistive (human controls the process) to task-agent (human triggers or reviews) to workflow-agent (human sets goals) – with governance scaling accordingly [15].

Conclusion: How the Updated JTBD Framework Gives AI Companies an Edge

The traditional Jobs-to-be-Done (JTBD) framework worked well for SaaS because buyers were primarily looking for tools. But by 2026, AI buyers are seeking outcomes. They want confidence that AI can deliver reliable results without introducing more complexity. Companies that adapt JTBD to emphasize delegation, trust, verification, acceptable error rates, and clear operating boundaries gain a competitive edge that’s tough to duplicate.

This shift in buyer expectations represents a turning point for AI companies.

"If you’re building an AI company, PMF is something you rent, not something you own." – Karan Mehandru, Managing Director, Madrona [4]

As discussed earlier, high rates of pilot failures and project abandonment plague the industry. The problem isn’t the technology – it’s how it’s positioned. Companies that focus on features or productivity often find themselves stuck in endless pilot programs. On the other hand, those that deliver trusted outcomes within defined boundaries earn renewals and long-term loyalty. The real differentiator in 2026 lies in how seamlessly AI integrates into human workflows [1].

Jason Lemkin, Founder of SaaStr, explains it perfectly:

"Once customers bond with an AI teammate, switching costs go through the roof. They’ve trained it. They trust it. They’re not starting over with your inferior version." [5]

This transition – from tools that complete tasks to AI that drives outcomes – is central to Data-Mania’s approach to refining and modernizing established frameworks.

FAQs

How is JTBD different for AI than for SaaS?

The Jobs-to-be-Done (JTBD) framework takes on a different dynamic when it comes to AI compared to SaaS. While SaaS primarily aims to help users complete tasks and boost efficiency, AI introduces new priorities: delegation, trust, oversight, acceptable error, and workflow compression. AI buyers are looking for systems that can autonomously manage tasks, making it essential for companies to focus on delivering trusted outcomes and defining clear operational limits – not just showcasing features or task-based assistance.

How do I decide what work to delegate to AI vs. keep human-owned?

To make the right call, think about the nature of the task and how much trust, oversight, and verification it demands. Hand off repetitive, rule-based, or data-intensive tasks to AI – these are areas where it excels with little need for supervision. Keep tasks that require judgment, ethical reasoning, or high-stakes decisions in human hands. The key is to let AI handle what it does best while ensuring humans stay in charge of responsibilities tied to trust and accountability.

What proof should buyers look for to trust an AI’s outputs?

When evaluating AI systems, it’s important to look for evidence of reliable performance. This includes checking for transparent verification methods, error rates within acceptable limits, and the ability to handle delegated tasks responsibly within set boundaries. Prioritize systems that balance autonomous operation with clear oversight, ensuring both dependability and accountability.