In this post we’re looking at one of the biggest generative AI ethics concerns I’ve uncovered in my recent research on large language models (LLMs): the fairness implications of reinforcement learning from human feedback (RLHF).

But first, if you’re completely new to the world of generative AI and LLMs, just know that one of the more fundamental aspects of working with them is fine-tuning.

Lucky for all genAI newbies out there, SingleStore has provided a free training on this exact topic!

Free Training: Using Google Vertex AI to Fine-Tune LLM Apps

If you’re a developer, data scientist, or machine learning enthusiast looking to revolutionize your LLM applications… It’s time to stop scrolling and start soaring! 🚀

Join this exclusive training where we’ll unveil the unmatched power of Google Vertex AI!

This isn’t your average tech talk; it’s a transformative experience featuring cutting-edge presentations and jaw-dropping, hands-on coding demonstrations.

Here’s a sneak-peek into what you’ll get:

- Deep Dive into Vertex AI: Grasp the nuts and bolts of Google Vertex AI and discover how to apply its core components to LLM applications.

- Hands-On Coding Experience: Forget the yawn-inducing slide decks; witness and participate in a live coding demo, where the potential of Vertex AI will unfold before your eyes.

- Optimization Masterclass: Uncover the secrets to fine-tuning LLM applications. You’ll walk away with actionable techniques and tools that make a difference.

- Insider Insights: Exclusive tips and best practices from experts who’ve been there, done that, and are now willing to share their blueprint for success.

The best part? Whether you’re just starting out in machine learning or you’re an experienced developer, this event has something incredibly valuable for everyone.

Spots are filling up faster than you can say “machine learning”!

Click the link below to reserve your seat and catapult your journey into leveraging Google Vertex AI for LLM applications.

👉 Sign Up Here 👈

My Generative AI Ethics Concerns Regarding RLHF

Yesterday I was learning more about reinforcement learning from human feedback (RLHF), a method for fine-tuning LLMs to minimize the chance that the model will produce “toxic” or “harmful” content. Along the way, I found myself questioning the generative AI ethics of the process itself.

I’m going to share what I learned about the process below, but I want to raise one point first.

I’ve used generative AI applications pretty darn heavily over the last five months… and I am incredibly impressed by the engineers’ and product people’s ability to launch products that, more or less, produce unbiased and harmless content. Of course, there are exceptions, which I will discuss in later blog posts and in my upcoming books and courses, but for now… let me just say: Hey, generative AI outputs aren’t perfect, but their builders have their hearts in the right place when it comes to ethical issues, and I see that generally reflected in the model outputs I get on a near daily basis.

Here’s the process that’s used to collect and prepare human feedback for use in fine-tuning LLMs.

Fine-Tuning LLMs with Human Feedback Process

- Choose an initial model.

- Use a prompt dataset to generate multiple model completions.

- Establish alignment criteria for the model.

- Have humans rank the model’s outputs based on these criteria.

- Gather all human feedback.

- Assign each set of completions to multiple labelers and average their rankings for a balanced view.

- Use the aggregated feedback to train a reward model, then use that reward model to fine-tune the LLM so that its outputs more closely align with human values.
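To make the feedback-gathering steps a little more concrete, here’s a minimal Python sketch of how rankings from several labelers might be averaged and then turned into “preferred vs. rejected” pairs, the format commonly used to train a reward model in RLHF. Everything in it is an assumption for illustration: the function names, the labeler IDs, the completion labels, and the simple averaging rule are hypothetical, and it assumes every labeler ranks every completion. Real pipelines vary.

```python
from collections import defaultdict
from itertools import combinations


def aggregate_rankings(rankings):
    """Average each completion's rank across labelers (1 = best).

    Assumes every labeler ranked every completion.
    """
    totals = defaultdict(float)
    for labeler_ranks in rankings.values():
        for completion, rank in labeler_ranks.items():
            totals[completion] += rank
    n_labelers = len(rankings)
    return {c: total / n_labelers for c, total in totals.items()}


def to_preference_pairs(mean_ranks):
    """Turn averaged ranks into (preferred, rejected) pairs, skipping ties."""
    pairs = []
    for a, b in combinations(sorted(mean_ranks), 2):
        if mean_ranks[a] < mean_ranks[b]:
            pairs.append((a, b))
        elif mean_ranks[b] < mean_ranks[a]:
            pairs.append((b, a))
    return pairs


# Hypothetical rankings from three labelers for one prompt's three completions.
rankings = {
    "labeler_1": {"A": 1, "B": 2, "C": 3},
    "labeler_2": {"A": 2, "B": 1, "C": 3},
    "labeler_3": {"A": 1, "B": 3, "C": 2},
}

mean_ranks = aggregate_rankings(rankings)
pairs = to_preference_pairs(mean_ranks)
print(mean_ranks)
print(pairs)
```

Notice that the three labelers above don’t agree with each other, yet the averaged result looks like a clean ordering. Simple averaging can hide that kind of disagreement, which is part of why the question of who the labelers are matters so much.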

Hairy Generative AI Ethics Concerns with Respect to RLHF

Gen AI companies say that they are selecting human labelers from a diverse pool, but… are they addressing their own personal biases in that selection process?

It’s difficult to form a consensus when belief systems (religious, political, or otherwise) often conflict in completely irreconcilable ways.

Often because of differing religious beliefs, what is normal in one country is completely illegal in others (for example, women showing their hair in public).

No one can really come in and deem one side correct and the other wrong. People and societies are free to be who they are and do what they want to do. Irreconcilable differences like this abound!

If you’re building a technology that has the potential to elevate or destroy societies, opinions of people from all sides should be represented equally in the logic and reasoning outputs that these technologies are constructed to generate.

With AI set to revolutionize various sectors, it’s crucial to include diverse perspectives in its development to avoid perpetuating unfairness.

Early intervention is essential for a future that benefits everyone, but I do not recall myself or anyone I personally know getting a seat at the decision-making table. These technologies are upending the digital world in irrevocable ways, and the changes will affect the lives of our children and the generations to come. The fact that the voices of everyday people, like you and me, are not considered at all doesn’t seem like right or fair generative AI ethics, IMHO.

Wanna learn from the course too? Be my guest! Generative AI with LLMs class on Coursera…

Pro-tip: If you like this type of training, consider checking out other free AI app development trainings we are offering here, here, here, here, here, here, and here.

I hope you enjoyed this post on generative AI ethics, and I’d love to see you inside our free newsletter community.

Yours Truly,

Lillian Pierson

PS. If you liked this post, please consider sending it to a friend!

Disclaimer: This post may include sponsored content or affiliate links and I may possibly earn a small commission if you purchase something after clicking the link. Thank you for supporting small business ♥️.

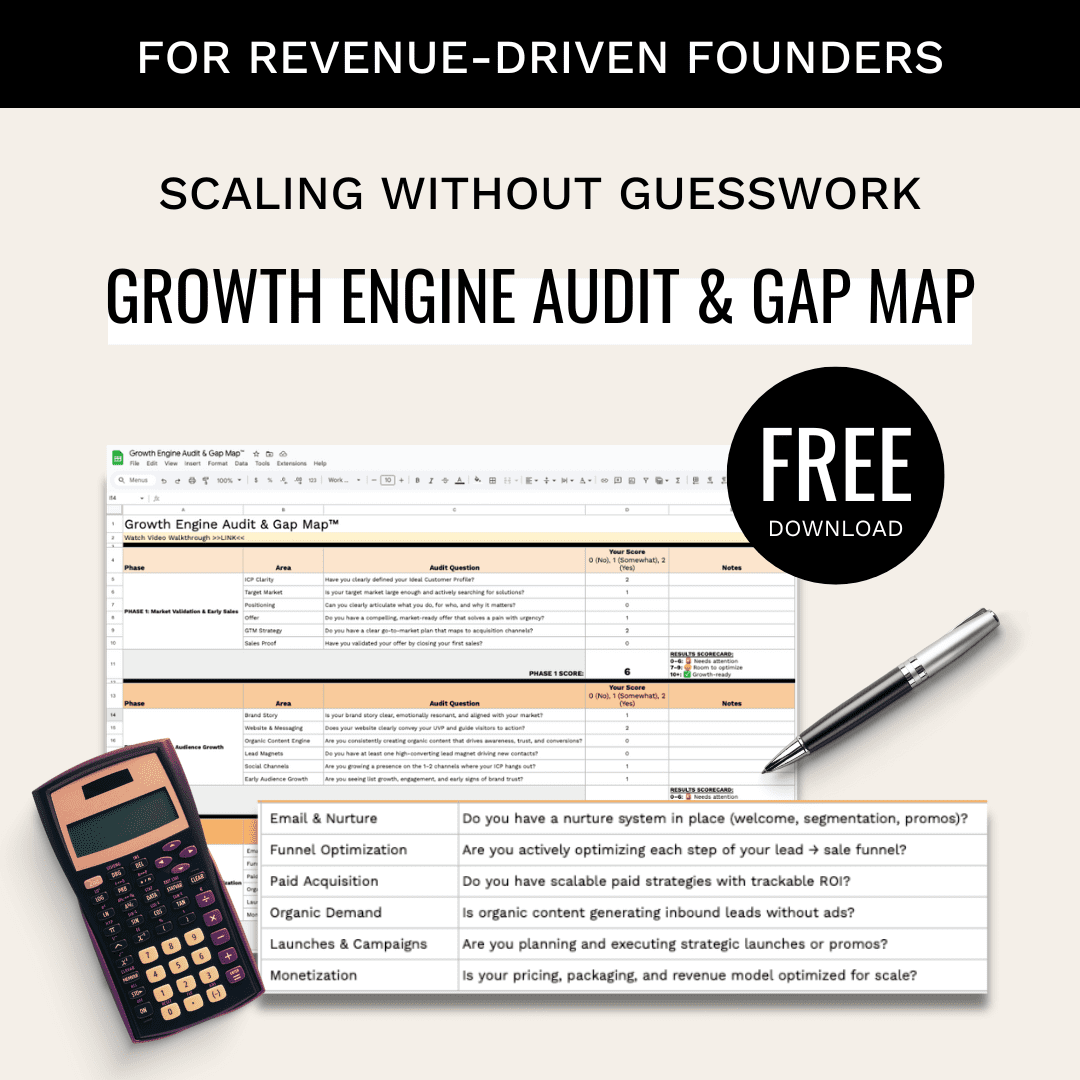
