In today’s rapidly evolving tech landscape, the fusion of AI with cloud computing is reshaping how we approach complex problems and solutions. Among the most significant advancements in this realm is the integration of Large Language Models (LLMs) with cloud infrastructure, a move that is significantly expanding what AI applications can do. At the heart of this shift lies AWS Bedrock, a managed service that makes LLMs available as cloud APIs.

This blog post explores the role AWS Bedrock plays in LLM integration and offers a glimpse into a world where the boundaries of AI’s potential keep expanding. Along the way, we also invite you to join a free training session for both aspiring and seasoned professionals working with AI in the cloud.

The Evolution of AI in the Cloud

The journey of AI in the cloud has been nothing short of revolutionary. From its nascent stages, cloud computing has offered a fertile ground for AI technologies to grow and flourish. Initially, the cloud served as a mere repository for data and a platform for basic computing tasks. However, as technology evolved, so did the capabilities of cloud platforms, transforming them into powerful engines capable of processing and analyzing vast amounts of data in real time.

This evolution paved the way for the integration of sophisticated AI models, particularly LLMs, into cloud infrastructure.

The integration of AI and cloud computing has unearthed new possibilities, allowing for more complex, scalable, and efficient AI applications. This transformative journey has not only democratized access to cutting-edge AI technologies but has also catalyzed a paradigm shift in how we view and utilize AI in the cloud.

Today, cloud platforms are not just hosting environments for AI; they provide the managed services for training, fine-tuning, and serving models, along with the scalability, flexibility, and computational power those tasks require.

The emergence of AWS Bedrock as a key player in this domain marks a significant milestone in this ongoing evolution. It represents a leap forward in how we harness the full potential of AI in the cloud by providing the tools and infrastructure necessary for seamless integration and deployment of advanced AI models.

As we delve deeper into AWS Bedrock’s role in this transformative era, it’s crucial to understand that the journey of AI in the cloud is an ever-evolving narrative, one that is continuously redefining the boundaries of what’s possible in the world of technology.

Understanding AWS Bedrock and Its Role in AI

AWS Bedrock (officially Amazon Bedrock) stands at the forefront of technologies that bridge AI with cloud computing. It is a fully managed Amazon Web Services (AWS) service that gives developers access to foundation models, including LLMs, from Amazon and third-party providers such as Anthropic, Meta, Mistral AI, and Cohere through a single API. By hiding the complexity of hosting these models, it makes LLMs more accessible for developers and businesses alike.

The primary role of AWS Bedrock is to run LLMs for you. These models require enormous computational resources, and Bedrock is serverless: AWS operates the accelerated infrastructure behind each model, so you send requests and pay for usage instead of provisioning and maintaining GPU servers yourself.

Furthermore, AWS Bedrock addresses some of the most pressing challenges in AI deployment, including data privacy, model customization, and resource optimization. AWS states that Bedrock does not use your prompts and completions to train its models or share them with third-party model providers, and you can fine-tune supported models on your own data within your AWS account. This is particularly vital for businesses that use AI in the cloud for sensitive applications and must stay compliant with data protection regulations.

Another key feature of AWS Bedrock is how it handles scaling. As demand for AI-powered solutions grows, applications must absorb varying workloads without compromising performance or security. Bedrock offers on-demand, pay-per-use access for variable traffic and Provisioned Throughput for steady, high-volume workloads that need reserved capacity.

By providing a streamlined platform for deploying and managing LLMs, AWS Bedrock is not just enhancing the integration of AI in the cloud; it’s revolutionizing the way we develop, deploy, and interact with AI applications. It represents a significant leap in making advanced AI technologies more accessible and manageable, thus further democratizing the power of AI in the cloud.

Enhancing LLM Integration with AWS Bedrock

The integration of LLMs into the cloud has been a game-changer in the realm of AI. AWS Bedrock significantly enhances this integration, making AI in the cloud not just a possibility but a highly efficient and scalable reality.

One of the most notable contributions of AWS Bedrock to LLM integration is that it simplifies complex processes. Deploying LLMs yourself can be a daunting task because of their complexity and the extensive computational resources they require. AWS Bedrock streamlines this by exposing models through a single API, the AWS SDKs, and console playgrounds, so developers can call, evaluate, and scale an LLM without hosting it themselves.
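To make the “single API” idea concrete, here is a minimal sketch of calling a model through Bedrock from Python with boto3’s `bedrock-runtime` client and its `converse()` method. It assumes boto3 is installed, AWS credentials are configured, and your account has been granted access to the model; the model ID and region are placeholders, and the helper names `build_converse_request` and `ask_bedrock` are hypothetical.

```python
"""Minimal sketch: send one prompt to a Bedrock model via the Converse API.

Assumptions: boto3 is installed, AWS credentials are configured, and the
account has access to MODEL_ID in the chosen region.
"""

# Placeholder model ID; substitute any model enabled in your account.
MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"


def build_converse_request(prompt: str, max_tokens: int = 256,
                           temperature: float = 0.2) -> dict:
    """Build the keyword arguments for bedrock-runtime's converse() call."""
    return {
        "modelId": MODEL_ID,
        # Converse uses a provider-neutral message format for every model.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def ask_bedrock(prompt: str, region: str = "us-east-1") -> str:
    """Call the model and return the text of its first content block."""
    import boto3  # imported here so the request builder works without boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    return response["output"]["message"]["content"][0]["text"]
```

Usage, with credentials in place: `print(ask_bedrock("In one sentence, what is a foundation model?"))`. Keeping the request builder separate from the network call makes it easy to swap the model ID without touching the rest of the code.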

Another critical aspect of AWS Bedrock is resource utilization. LLMs are known for their intensive use of processing power and memory. Because Bedrock runs models on AWS-managed infrastructure, you do not size or manage GPU instances; on-demand usage is billed by the tokens you process, which can lower costs for spiky or low-volume workloads compared with keeping dedicated servers running.

AWS Bedrock also supports interactive, low-latency use cases: models can stream their output token by token, which matters for chat assistants and other applications where users are waiting on the response. This responsiveness helps businesses act more quickly on the insights generated by LLMs.
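One concrete form of real-time behavior is streaming. The sketch below uses boto3’s `converse_stream()`, whose response contains a `stream` of events; text arrives in `contentBlockDelta` events. It assumes boto3, configured credentials, and model access; the helper names `iter_text_deltas` and `stream_answer` are hypothetical.

```python
from typing import Iterable, Iterator


def iter_text_deltas(events: Iterable[dict]) -> Iterator[str]:
    """Yield text fragments from a converse_stream() event stream.

    Other event types (messageStart, contentBlockStop, messageStop,
    metadata) and non-text deltas such as tool-use input are skipped.
    """
    for event in events:
        delta = event.get("contentBlockDelta", {}).get("delta", {})
        if "text" in delta:
            yield delta["text"]


def stream_answer(prompt: str, model_id: str, region: str = "us-east-1") -> str:
    """Print the model's reply as it streams in and return the full text."""
    import boto3  # imported lazily so iter_text_deltas works without boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse_stream(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    pieces = []
    for fragment in iter_text_deltas(response["stream"]):
        print(fragment, end="", flush=True)  # show text as soon as it arrives
        pieces.append(fragment)
    print()
    return "".join(pieces)
```

Separating event parsing from the network call lets you test the parsing logic with recorded or hand-written events before pointing it at a live model.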

Moreover, AWS Bedrock provides robust security features, ensuring that the integration of LLMs into the cloud is secure and compliant with various data protection standards. This is particularly important given the sensitive nature of the data that’s often processed by AI applications.

In essence, AWS Bedrock not only simplifies the integration of LLMs into the cloud but also elevates the overall capabilities of AI in the cloud. It allows businesses and developers to harness the power of LLMs with greater ease, efficiency, and security, thus unlocking new possibilities in AI-driven solutions and applications.

Overcoming Challenges in AI Deployment

Deploying AI in the cloud comes with its unique set of challenges, from ensuring efficient resource utilization to maintaining data security and privacy. AWS Bedrock is instrumental in addressing these challenges, further solidifying its role as a crucial tool for AI deployment in cloud environments.

One of the primary challenges in deploying AI, especially LLMs, is the need for high computational power. These models process vast amounts of data, requiring significant computational resources. AWS Bedrock tackles this by providing scalable cloud resources that can be adjusted based on the demands of the AI application. This scalability ensures that AI in the cloud is not only feasible but also efficient, allowing for the handling of large-scale AI tasks without a compromise in performance.

Data privacy and security are other critical concerns in AI deployment. With the increasing emphasis on data protection regulations, AI applications must comply with these standards. AWS Bedrock supports this with encryption of data in transit and at rest (including with customer-managed AWS KMS keys), fine-grained access control through AWS IAM, and private connectivity from your VPC through AWS PrivateLink, making it easier for organizations to deploy AI in the cloud while meeting stringent data protection requirements.

Another challenge lies in integrating AI with existing cloud infrastructure. Because AWS Bedrock is a standard AWS service, existing applications can call LLMs through the AWS SDKs and combine them with services such as AWS Lambda, Amazon S3, and Amazon CloudWatch, as well as open-source frameworks such as LangChain. This shortens the deployment process and reduces the complexity typically associated with such integrations.

Finally, the cost of deploying and maintaining AI applications in the cloud can be prohibitive. AWS Bedrock’s on-demand pricing charges per input and output token, so you pay only for what you use, while Provisioned Throughput offers reserved capacity for predictable workloads. This cost efficiency is crucial for businesses that want to use AI in the cloud without incurring exorbitant costs.
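Because on-demand Bedrock usage is billed per input and output token, a rough budget is simple arithmetic. The sketch below takes the `usage` dict that a Converse API response includes (`inputTokens`, `outputTokens`) and projects a monthly figure. The prices are hypothetical placeholders, not real Bedrock rates, and the function names are illustrative.

```python
"""Rough per-request and monthly cost estimate from Bedrock token usage.

The prices below are HYPOTHETICAL placeholders, not real Bedrock rates;
look up the current price for your model and region before relying on this.
"""

# Hypothetical USD prices per 1,000 tokens (placeholders only).
PRICE_PER_1K_INPUT = 0.001
PRICE_PER_1K_OUTPUT = 0.002


def request_cost(usage: dict,
                 price_in: float = PRICE_PER_1K_INPUT,
                 price_out: float = PRICE_PER_1K_OUTPUT) -> float:
    """Cost of one request, given a Converse-style usage dict."""
    input_cost = (usage["inputTokens"] / 1000) * price_in
    output_cost = (usage["outputTokens"] / 1000) * price_out
    return input_cost + output_cost


def monthly_cost(usage: dict, requests_per_day: int, days: int = 30) -> float:
    """Project a monthly bill, assuming every request uses the same tokens."""
    return request_cost(usage) * requests_per_day * days
```

After a `converse()` call you could pass `response["usage"]` straight to `request_cost`. Real traffic varies, so treat the monthly projection as an order-of-magnitude estimate rather than a forecast.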

Future of AI in the Cloud with AWS Bedrock

As we look towards the future, the integration of AI in the cloud is poised to become even more pivotal in driving innovation and technological advancement. AWS Bedrock is at the forefront of this evolution and it’s set to play a key role in shaping the landscape of AI applications and deployments.

The potential for AI in the cloud is vast, with AWS Bedrock leading the charge in unlocking new capabilities and applications. One of the future trends we can anticipate is the increasing use of AI for more complex, real-time decision-making processes. With its robust infrastructure, AWS Bedrock will enable AI systems to process and analyze data at unprecedented speeds, making real-time analytics and responses a reality in various industries.

Another exciting prospect is the democratization of AI. AWS Bedrock lowers the barrier to entry for businesses and developers wanting to leverage AI in the cloud. This accessibility means that more organizations, regardless of their size or technical prowess, can harness the power of advanced AI technologies to innovate and compete in the market.

Furthermore, we can expect advances in how AI systems adapt over time. AWS Bedrock’s scalable and flexible environment, including model customization through fine-tuning, provides a strong platform for developing more sophisticated AI models that improve their performance and accuracy as new data becomes available.

The integration of AI with other emerging technologies is another area of potential growth. AWS Bedrock’s versatile and integrative nature will facilitate the convergence of AI with technologies like IoT, blockchain, and more, leading to the creation of groundbreaking solutions and applications.

In essence, the future of AI in the cloud is bright and full of possibilities, with AWS Bedrock serving as a catalyst for innovation and growth. As we continue to explore and expand the boundaries of what AI can achieve, AWS Bedrock will undoubtedly be a key player in driving these advancements, making AI more powerful, accessible, and impactful than ever before.

Conclusion

As we have explored in this blog, the integration of AI in the cloud is a dynamic and rapidly evolving field, with AWS Bedrock playing a crucial role in shaping its future. The advancements and capabilities brought forth by AWS Bedrock are not just enhancing the efficiency and scalability of AI applications but are also paving the way for new innovations and opportunities in the realm of AI in the cloud.

The synergy between AI and cloud computing, facilitated by AWS Bedrock, is more than just a technological advancement; it’s a transformative movement that is redefining the limits of what AI can achieve. From simplifying complex deployments to ensuring security and scalability, AWS Bedrock stands as a testament to the power and potential of AI in the cloud.

As we stand on the brink of this exciting new era, the opportunity to be a part of this transformation is within your grasp. Whether you’re a developer, a data professional, or simply an enthusiast of AI and cloud technologies, now is the time to dive in and explore the endless possibilities that AWS Bedrock offers.

Join Our Free Training Session

To further your understanding and skills in this groundbreaking field, we invite you to join our free training session on “Using AWS Bedrock & LangChain for Private LLM App Dev.”

This session will not only deepen your knowledge of AWS Bedrock and its applications but also provide you with practical insights into deploying and managing AI in the cloud.

Don’t miss this opportunity to be at the forefront of AI innovation. Click here to register for the free training and embark on a journey that will transform your understanding of AI in the cloud and open doors to new, exciting opportunities.

Pro-tip: If you like this training, consider checking out the other free AI app development trainings we are offering.

🤍 A creative collaboration with SingleStore 🤍

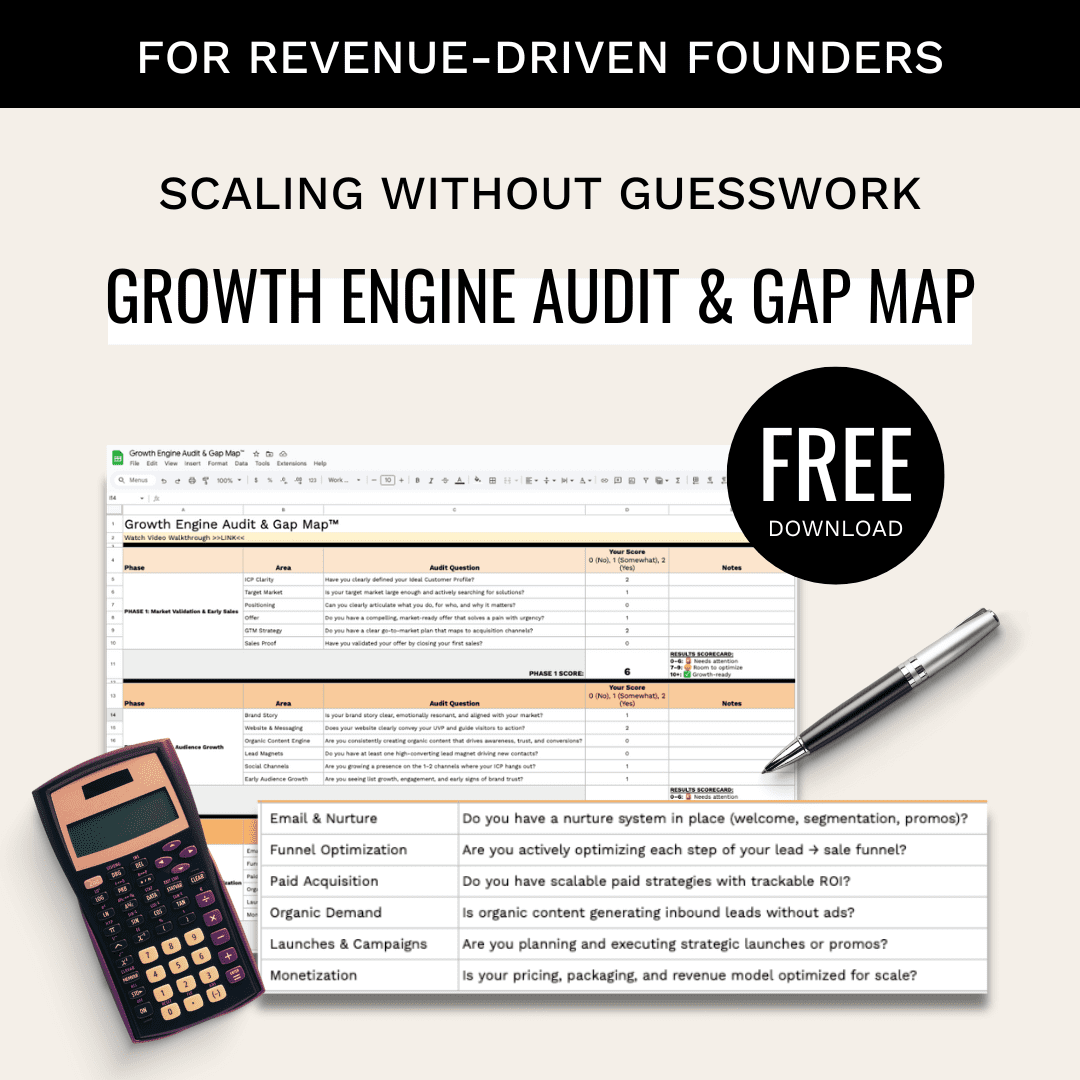

Building a B2B startup growth engine? See how Lillian Pierson works as a fractional CMO for tech startups navigating GTM, AI, and scale.